One thing NVIDIA has been great at for several generations is ensuring that there is a product for every budget. The 3090 is your pro level card, delivering a ton of power and memory to go with it. The 3080 is the flagship consumer product, as you can see in our review. We liked the 3070 quite a bit as well (our review), noting that it really hit a sweet spot for power to performance. Now we see the launch of the GeForce RTX 3060 Ti at $399, a brand new price point for the entry market. Let’s get this slotted and see where it lands in our benchmarks.

There are a lot of similarities between the GeForce RTX 3070 and the GeForce RTX 3060 Ti, but this card is miles away from the flagship 3080. As such, there will be a lot of comparison with the former and less with the latter. Just like the 3070, the RTX 3060 Ti measures 9.5” in length and 4.4” width, taking up two slots, versus the 3080 which measures 11.2” and 4.4”. Once again it uses the single 8-pin proprietary connector, but where the 3070 requires a 650W PSU, the 3060 Ti only needs a 500W power supply. Just as we saw on the 3070, there is only a small adjustment to the flow-through construction solution we saw on the 3080 and 3090. In fact, other than a shiny silver body, the card is indistinguishable from its 3070 counterpart…at least on the outside.

Just like in our 3080 and 3070 reviews, I want to cover some jargon to ensure you have a clear picture of the hardware under the hood in the GeForce RTX 3060 Ti. Just like the 3070, the GeForce RTX 3060 Ti uses GDDR6 instead of GDDR6X, and also sports 8GB of it. Unlike the 3070, which is clearly positioned as a 4K-capable card, the 3060 Ti is squarely aimed at the 1440p space, making 8GB entirely appropriate.

The more pixels your graphics card pushes, the more VRAM you need. Features like ray tracing and anti-aliasing work the card hard, as do higher refresh rates and resolutions. Refresh rate, put simply, is how many times per second the scene is redrawn (e.g. 60 times a second versus 30 times a second), and doing that at 4K resolution versus 1080p means drawing four times the pixels. Let’s talk about the processor in this card before we dig into the numbers.
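Before moving on, the "four times the pixels" figure is easy to check for yourself. The sketch below is only illustrative: it assumes the standard 16:9 dimensions for each resolution (the review never spells them out), and the function names are my own.

```python
# Standard 16:9 dimensions for each resolution (assumed, not taken from the review).
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def pixels_per_frame(name):
    """Number of pixels the card has to draw for one frame."""
    width, height = RESOLUTIONS[name]
    return width * height


def pixels_per_second(name, refresh_hz):
    """Pixels drawn per second when every refresh gets a new frame."""
    return pixels_per_frame(name) * refresh_hz


base = pixels_per_frame("1080p")
for name in RESOLUTIONS:
    px = pixels_per_frame(name)
    print(f"{name}: {px:,} pixels per frame ({px / base:.2f}x 1080p), "
          f"{pixels_per_second(name, 60):,} pixels per second at 60 Hz")
```

Under those assumptions, 4K works out to exactly four times the pixels of 1080p, while 1440p sits a bit under double, which is part of why it is a comfortable target for a card in this class.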

The GeForce RTX 3080 runs on the GA102 processor, sporting over 28 billion transistors with a shockingly small die size of 628 mm² with 10752 shading units, 336 texture mapping units, 112 render output units, 336 tensor cores, and 84 ray tracing acceleration cores. That GA102 GPU is what powers the RTX 3080 and RTX 3090, and it’s truly bleeding edge. The RTX 3070 and RTX 3060 Ti use a GA104 GPU, which is a smaller processor with a 392 mm² die and 17.4 billion transistors. While it does have fewer texture mapping units, acceleration cores, and shading units, it also comes in at a far lower cost. In fact, the 3060 Ti launches at a price of just $399 versus the $699 of the 3080 and $499 of the 3070.

If you are a number cruncher, strap in because there’s about to be a lot of them as we talk about what’s under the hood of the RTX 3060 Ti. The 3070 shipped with a base clock of 1500 MHz, with the 3060 Ti coming in at 1410. Similarly, the boost clocks for the two cards are 1715 and 1665, respectively. Both cards have the same memory clock of 1750 MHz, with a 14 Gbps effective throughput. The 3070 has 96 raster operation pipelines (ROPs), which help perform anti-aliasing and provide the adjusted image to the framebuffer, and the 3060 Ti slims that to 80. TMUs are Texture Mapping Units and they manipulate the bitmap image and place it in the environment; the 3070 has 184 of these, whereas the 3060 Ti cuts back to 152. Finally, the 3070 has 5888 cores for processing all of the graphics and features, and the 3060 Ti slims back to 4864. That’s a lot of numbers, but what does it all add up to in the end? Let’s dig into the rest of the tech to better answer that question.
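One rough way to see what those numbers add up to is to express each 3060 Ti spec as a fraction of the 3070's. This is a back-of-the-envelope sketch using only the figures quoted above; the `SPECS` table and `ratio` helper are names I made up for illustration, and spec ratios are not a prediction of benchmark results.

```python
# (RTX 3070, RTX 3060 Ti) values exactly as quoted in this review.
SPECS = {
    "base clock (MHz)": (1500, 1410),
    "boost clock (MHz)": (1715, 1665),
    "ROPs": (96, 80),
    "TMUs": (184, 152),
    "cores": (5888, 4864),
}


def ratio(spec):
    """3060 Ti value divided by the 3070 value for one spec."""
    rtx_3070, rtx_3060_ti = SPECS[spec]
    return rtx_3060_ti / rtx_3070


for spec in SPECS:
    print(f"{spec}: the 3060 Ti has {ratio(spec):.1%} of the 3070's figure")
```

By these figures the clocks are only a few percent lower, while the cores, TMUs, and ROPs all land at roughly 83% of the 3070, which lines up with the $399 versus $499 pricing.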

What is an RT Core?

Arguably one of the most misunderstood aspects of the RTX series is the RT core. This core is a dedicated pipeline to the streaming multiprocessor (SM) where light rays and triangle intersections are calculated. Put simply, it’s the math unit that makes realtime lighting work and look its best. Multiple SMs and RT cores work together to interleave the instructions, processing them concurrently, allowing the processing of a multitude of light sources intersecting with the objects in the environment in multiple ways, all at the same time. In practical terms, it means a team of graphical artists and designers don’t have to “hand-place” lighting and shadows, and then adjust the scene based on light intersection and intensity — with RTX, they can simply place the light source in the scene and let the card do the work. I’m oversimplifying it, for sure, but that’s the general idea.

The Turing architecture cards (the 20X0 series) were the first implementations of this dedicated RT core technology. The 2080 Ti had 72 RT cores, delivering 29.9 Teraflops of throughput, whereas the RTX 3080 has 68 2nd-gen RT cores with 2x the throughput of the Turing-based cards, delivering 58 Teraflops of RTX power, and the 3070 shipped with 47 of them to dish out 40 Teraflops of shadow-processing, real-time ray tracing, shading, and compute power. The RTX 3060 Ti comes to us with 38 RT Cores, providing 32.4 Teraflops — amazingly beating out the 2080 Ti using only half the cores!
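If you want to sanity check the "2x the throughput" claim, you can divide the quoted teraflops by the quoted RT core counts. The snippet below is a sketch that uses only the numbers from the paragraph above; the `CARDS` table and `tflops_per_core` helper are hypothetical names, and per-core division is a simplification that ignores clock speeds.

```python
# (RT core count, quoted RT teraflops) exactly as stated in this review.
CARDS = {
    "RTX 2080 Ti": (72, 29.9),
    "RTX 3080": (68, 58.0),
    "RTX 3070": (47, 40.0),
    "RTX 3060 Ti": (38, 32.4),
}


def tflops_per_core(card):
    """Quoted throughput divided evenly across the card's RT cores."""
    cores, tflops = CARDS[card]
    return tflops / cores


baseline = tflops_per_core("RTX 2080 Ti")
for card in CARDS:
    per_core = tflops_per_core(card)
    print(f"{card}: {per_core:.3f} TFLOPS per RT core "
          f"({per_core / baseline:.2f}x the 2080 Ti)")
```

With these figures, each Ampere card comes out at a little over twice the per-core throughput of the 2080 Ti, which is how 38 cores can edge out 72.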

What is a Tensor Core?

Here’s another example of “but why do I need it?” within GPU architecture: the Tensor Core. This relatively new technology from NVIDIA had seen wider use in high performance supercomputing or data centers before finally arriving on consumer-focused cards in the latter and more powerful 20X0 series cards. Now, with the RTX 30X0 series of cards we have the third generation of these processors. The 2080 Ti had 240 second-gen cores, the 3080 has 272 third-gen Tensor cores, the 3070 comes with 184 of them, and the RTX 3060 Ti has 152. Don’t fall into the trap of thinking that more is better, though: the third generation of Tensor cores is double the speed of its predecessor. That’s great and all, but what do they do?

Tensor cores are used for AI-driven learning, and we see this more directly applied to gaming via DLSS, or Deep Learning Super Sampling. More than marketing buzzwords, DLSS can take a few frames, analyze them, and then generate a “perfect frame” by interpreting the results using AI, with help from supercomputers back at NVIDIA HQ. The second pass through the process uses what it learned about aliasing in the first pass and then “fills in” what it believes to be more accurate pixels, resulting in a cleaner image that can be rendered even faster. Amazingly, the results can actually be cleaner than the original image, especially at lower resolutions, and having less to process means more frames can be rendered using the power saved. It’s literally free frames. DLSS 3.0 is still swirling in the wind, but very soon we may see this applied more broadly than it is today. We’ll have to keep our eyes peeled for that one, but when it does release, these Tensor cores are the components to do the work. That’s all fancy, but wouldn’t you rather see it in action? Here’s a quick snippet from Control that does exactly that.

DLSS 2.0 was introduced in March of 2020, and it took the principles of DLSS and set out to resolve the complaints users and developers had, while improving the speed. To that end, they reengineered the Tensor core pipeline, effectively doubling the speed while still maintaining the image quality of the original, or even sharpening it to the point where it looks better than the source! For the user community, NVIDIA exposed the controls to DLSS, providing three modes to choose from: Performance for maximum framerate, Balanced, and Quality, which looks to deliver the best final image. Developers saw the biggest boon with DLSS 2.0 as they were given a universal AI training network. Instead of having to train each game and each frame, DLSS 2.0 uses a library of non-game-specific parameters to improve graphics and performance, meaning that the technology could be applied to any game should the developer choose to do so. Game development cycles being what they are, and with the tech only hitting the street earlier this year, it’s likely we’ll see more use of DLSS 2.0 during the holiday blitz, and even more after the turn of the year.

Frametime vs. Framerate:

It’s important to understand that these two terms are not in any way interchangeable. Framerate tells you how many frames are rendered each second, and Frametime tells you how long it took to render each frame. While there is a great deal of focus on framerate and the resolution at which it’s rendered, frametime should likely receive equal if not greater attention. When frames take too long to render they can be dropped or desync, wreaking all sorts of havoc including stuttering. If frame 1 takes 17ms, but frame 2 takes 240ms, that’s going to make for a jittery result. Realize that both are important and don’t become myopically focused on just framerate as it only tells half the story – even if a device is capable of delivering 144fps, if it does so in an uneven fashion, you’ll see a choppy output.
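To make the difference concrete, here is a small sketch with two made-up frametime traces. The traces and helper names are hypothetical, chosen only so that both runs average the same framerate while one of them hides a pair of long frames.

```python
def average_fps(frametimes_ms):
    """Frames rendered divided by total elapsed time in seconds."""
    return len(frametimes_ms) / (sum(frametimes_ms) / 1000.0)


def frametime_to_fps(frametime_ms):
    """Framerate you would get if every frame took this long."""
    return 1000.0 / frametime_ms


def worst_frametime(frametimes_ms):
    """The single slowest frame in the trace, in milliseconds."""
    return max(frametimes_ms)


# Hypothetical one-second traces: 50 frames each, so both average 50fps.
smooth = [20.0] * 50                 # every frame takes 20 ms
spiky = [15.0] * 48 + [140.0] * 2    # mostly fast, with two long hitches

for label, trace in (("smooth", smooth), ("spiky", spiky)):
    print(f"{label}: {average_fps(trace):.1f} fps average, "
          f"worst frame {worst_frametime(trace):.0f} ms")

# The 17 ms and 240 ms frames from the example above, expressed as framerates.
print(f"17 ms is about {frametime_to_fps(17.0):.1f} fps; "
      f"240 ms is about {frametime_to_fps(240.0):.1f} fps")
```

Both traces report the same average framerate, but only the frametime numbers reveal the hitches in the second one, which is exactly the stutter you would feel while playing.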

What is RTX IO?

Right now, whether it’s on PC or consoles, there is a flow of data that is largely inefficient and our games suffer for it. Storage platforms deliver the goods across the PCIe bus to the CPU and into system memory, where they are decompressed. Those decompressed textures are then passed back across the PCIe bus to the GPU, which then hands them off to the GPU memory. Once that’s done, the result is passed to your eyeballs via your monitor. Microsoft has a new storage API called DirectStorage that allows NVMe SSDs to bypass this process. Combined with NVIDIA RTX IO, the compressed textures instead go from the high-speed storage across the PCIe bus and directly to the GPU. Assets are then decompressed by the far-faster GPU and delivered immediately to your waiting monitor. Cutting out this back-and-forth business frees up power that could be used elsewhere, and NVIDIA estimates up to a 100X improvement. When developers talk about being able to reduce install sizes, that comes directly from this technology. So, what’s the catch?

NVIDIA RTX IO is an absolutely phenomenal bit of technology, but it’s so new that nobody has it ready for primetime. As a result, I can only tell you that it’s coming and talk about how awesome this concept and technology is; I can’t test it for you. That said, as storage platforms shift towards incredibly high speed drives that unfortunately have very low storage capacity, you can bet we’ll see this come to life and quickly. Stay tuned on this — it could be a huge win for gamers everywhere.

NVIDIA Broadcast:

There is an added bonus that comes with the added power of the Tensor cores. While the AI cores do handle DLSS, they also deliver some additional smoothing in an app NVIDIA is calling “NVIDIA Broadcast”. This app can improve the output of your microphone, speakers, and camera by applying AI learning in much the same way that we see it in the examples above. It can clean up static or background noise in your audio, artifacting when you are streaming with a green screen (or without one, as Broadcast can simply apply a virtual background), and distracting noise from another person’s audio, and it can even perform a bit of head-tracking to keep you in frame if you are the type that moves around a lot. Not just a gaming-focused application, this works for any video conferencing, so feel free to Zoom away while we are all stuck inside.

Inside the application are three tabs – microphone, speakers, and camera. Microphone is supposed to let you remove background noise from your audio. I use a mechanical keyboard and I’ve been told more than once that it’s loud. Well, even with this enabled, it’s still loud — my Seiren Elite doesn’t miss a single sound.

The second tab is speakers which is supposed to reduce the amount of noise coming from other sources. I found this to be fairly effective, removing obnoxious typing noises from others — hopefully I can do the same for them one day.

The final tab is where the magic happens. Under the Camera tab you can blur your background, replace it with a video or image, simply delete the background, or “auto-frame”. Call software like Zoom and Skype can do this as well, but even in this early stage I can say they don’t do it this well. Better still, getting it pushed into OBS was as simple as selecting a device, selecting “Camera NVIDIA Broadcast” and it was done. It doesn’t get any easier than this.

The software has fully launched at this point, delivering a lot of value for the low price of free! In practice I saw a marginal (~2%) reduction in framerate when recording with OBS — a pittance when games like Overwatch and Death Stranding are punching above 150fps, and it works with fairly terrible lighting like I have in my office. A properly lit room will look miles better.

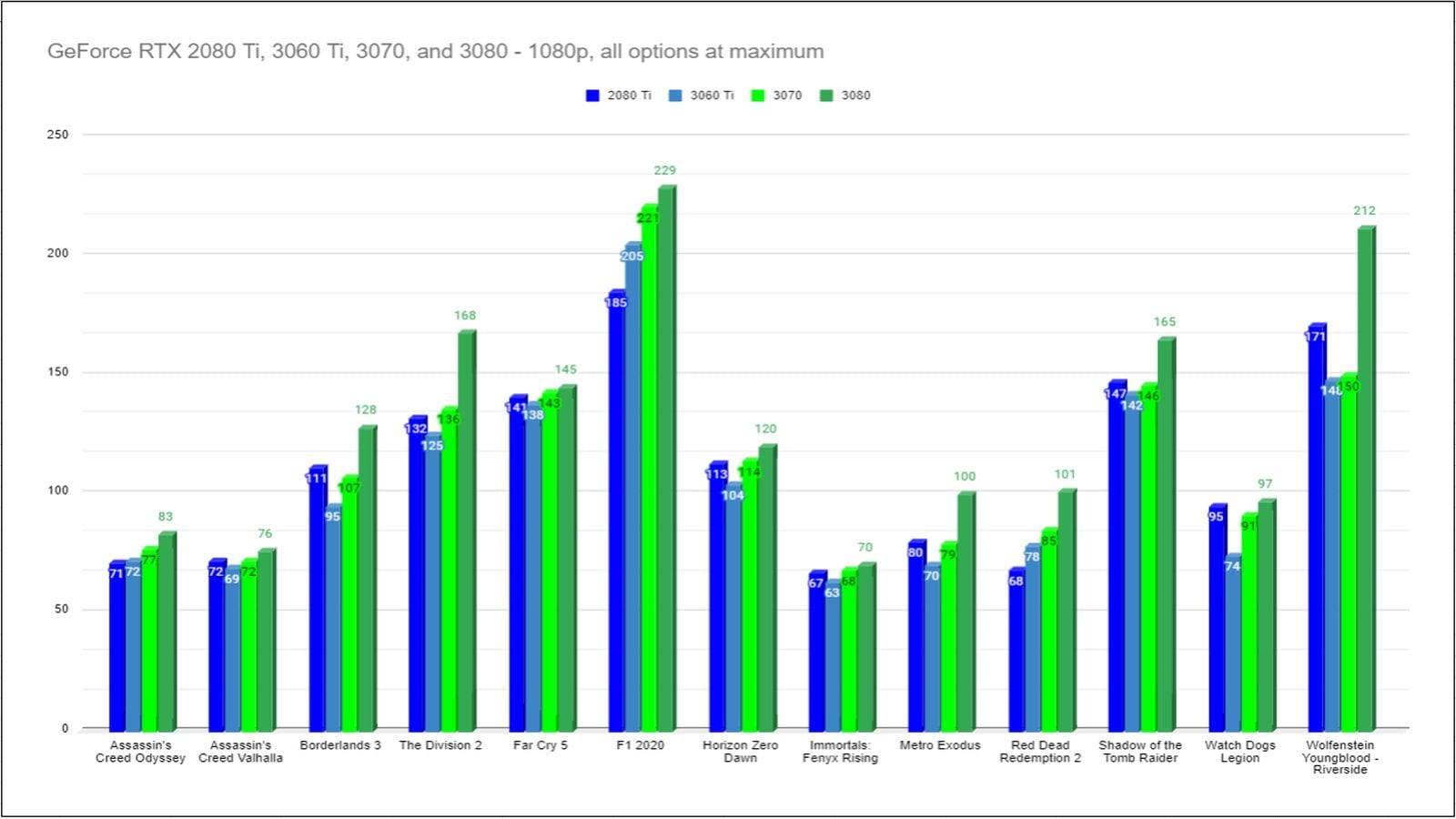

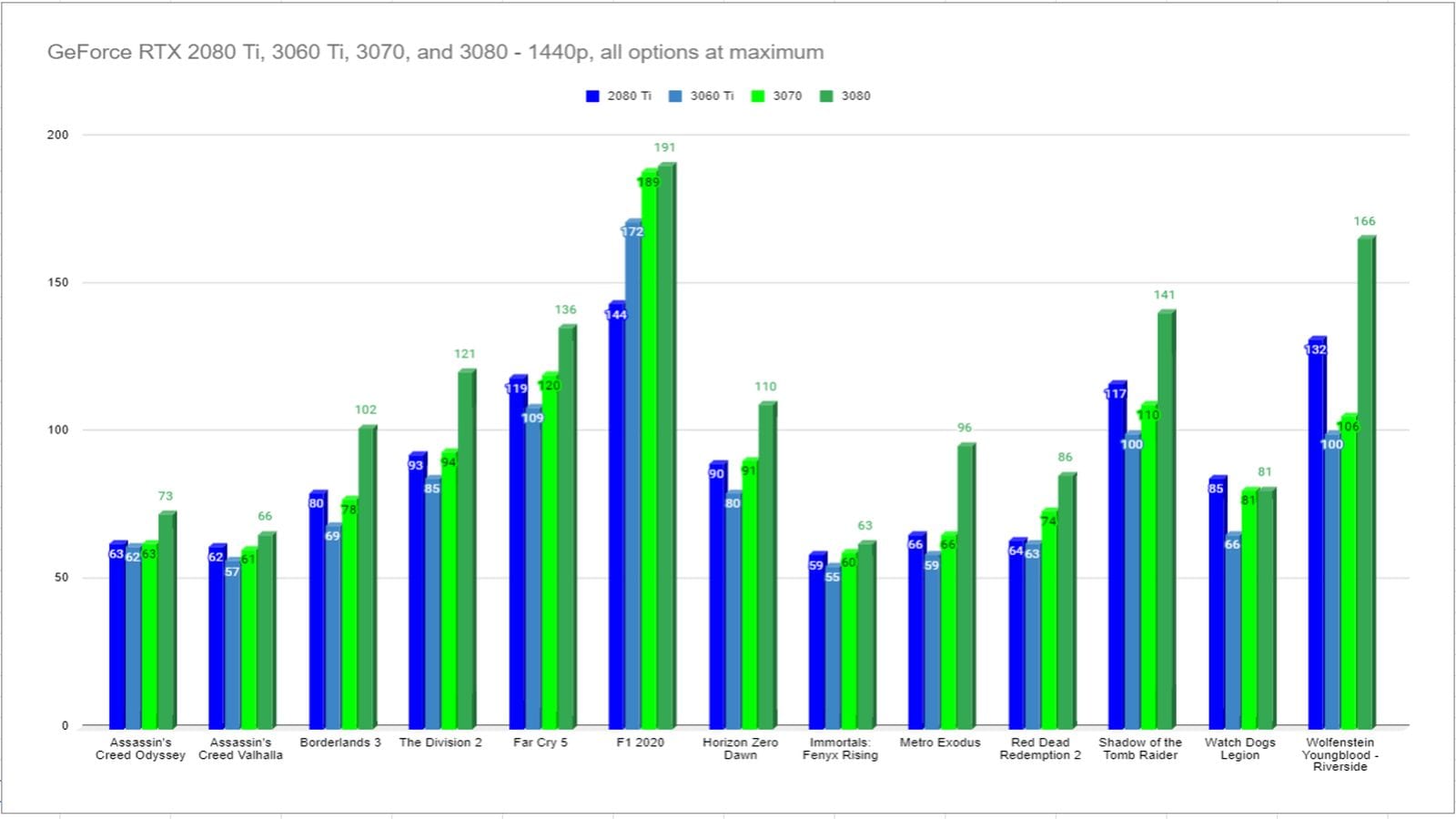

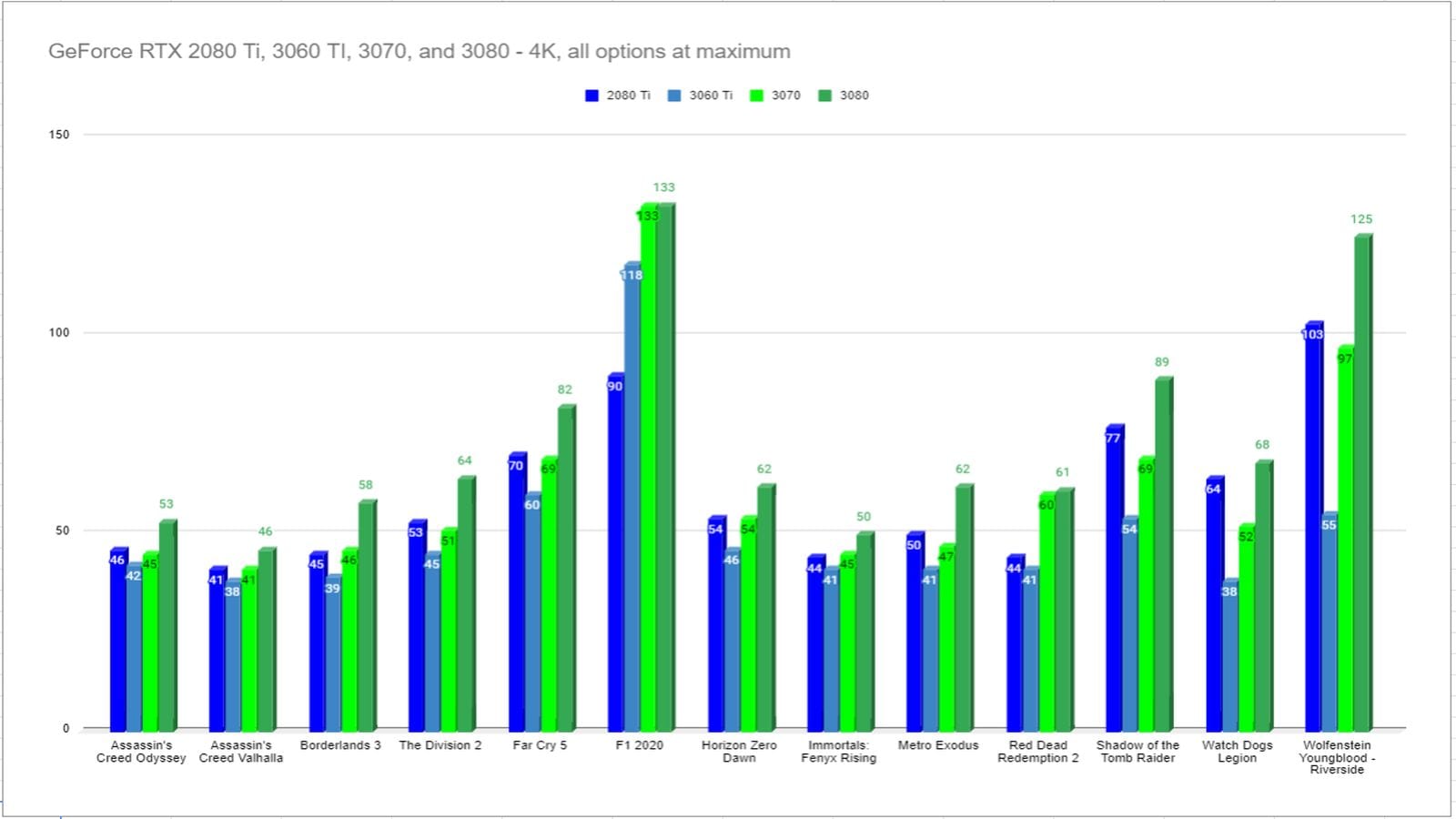

Gaming Benchmarks:

Drivers are beginning to mature, and games are getting better at utilizing RTX technology. We are seeing significant improvement in framerates and better performance overall. That said, when the prices are this competitive, it becomes even more important to include the entire lineup of cards to see where each falls in price and performance. As such, we are including the 3080, 3070, 3060 Ti, and 2080 Ti in our analysis. We will continue to upgrade this benchmark suite as games like Immortals: Fenyx Rising and Cyberpunk 2077 launch very shortly, with games like Ready or Not, Vampire: the Masquerade Bloodlines 2, The Medium, Bright Memory: Infinite, Dying Light 2, and many more on the immediate horizon.

ANALYSIS:

There are a lot of interesting bits of information to glean from these benchmarks. Sure, the standouts are the big number jumps, but they also indicate something more subtle. Games that take advantage of new lighting like RTX instead of performing complex calculations for lighting and shadows see a sizable boost over their contemporaries without that tech. By way of example, Assassin’s Creed Odyssey is notoriously CPU-bound, and since it runs on the Anvil engine, so is Assassin’s Creed: Valhalla, so throwing more hardware at it will only go so far. If the game were to take advantage of tech like RTX, we could see that constraint removed, likely granting huge gains.

I mentioned early on that the RTX 3060 Ti is squarely aimed at the 1440p market, and that’s even more apparent in the benchmarks. 4K numbers take a noticeable dive, but not nearly as low as I anticipated. In fact, most games are in the mid 40s at that resolution, with RTX-enabled standouts being downright eyebrow-raising, nearly hitting that butter-smooth 60 mark.

Popping down to 1440p, we see the RTX 3060 Ti turn in some impressive numbers. Every game on our list exceeded, or was within 5 frames of, 60fps, with games like Shadow of the Tomb Raider hitting 100fps, as did Wolfenstein: Youngblood and Far Cry 5.

It’s very clear that the RTX 3060 Ti is meant to replace cards like the RTX 2080, and anything below it. Based on previous benchmarks, it comes in around the power band of the 2070 Super, but it’s not exactly an apples to apples comparison due to the architectural changes in the Ampere-based cards. Bonus to that, of course, is that the card doesn’t cost $499. In fact, with only a few exceptions, it’s within striking distance of the painfully-priced 2080 Ti.

It’s important to note that many of the games on our current benchmark list are CPU-bound. The interchange between the CPU, memory, GPU, and storage medium creates several bottlenecks that can interfere with your framerate. If your graphics card can ingest gigs of information every second, but your crummy mechanical hard drive struggles to hit 133 MB/s, you’ve got a problem. If you are using high-speed Gen3 or Gen4 M.2 SSD storage, that drive can hit 3 or even 4 GB/s, but if your older processor isn’t capable of processing it, it’s not going to be able to fill your GPU either. Supposing you’ve got a shiny new 10th Gen Intel CPU, you may be surprised that it also may not be fast enough for what’s under the hood of the RTX 3070 or RTX 3080, and we see some of that phenomenon in the benchmarks above. That said, if you aren’t ready to upgrade your entire system, the RTX 3060 Ti might just extend the life of your existing rig. NVIDIA is once again ahead of the power curve on their highest end flagship cards, but the RTX 3060 Ti finds a sweet spot for entry to mid-range systems.
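The storage gap is easier to appreciate with a quick calculation. The sketch below uses the drive speeds mentioned above, but the 10 GB asset size and the function name are hypothetical, and it assumes a best-case sequential read with 1 GB treated as 1000 MB and nothing else in the system slowing things down.

```python
def seconds_to_read(size_gb, throughput_mb_per_s):
    """Best-case read time, treating 1 GB as 1000 MB."""
    return size_gb * 1000 / throughput_mb_per_s


ASSET_GB = 10  # hypothetical amount of game data to load

# Throughput figures in MB/s, taken from the paragraph above.
DRIVES = {
    "mechanical hard drive": 133,
    "Gen3 M.2 SSD": 3000,
    "Gen4 M.2 SSD": 4000,
}

for drive, speed in DRIVES.items():
    print(f"{drive}: about {seconds_to_read(ASSET_GB, speed):.1f} seconds "
          f"to read {ASSET_GB} GB")
```

Under those assumptions the hard drive needs over a minute for the same data an SSD delivers in a few seconds, and that is before the CPU has to decompress any of it.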

COOLING AND NOISE:

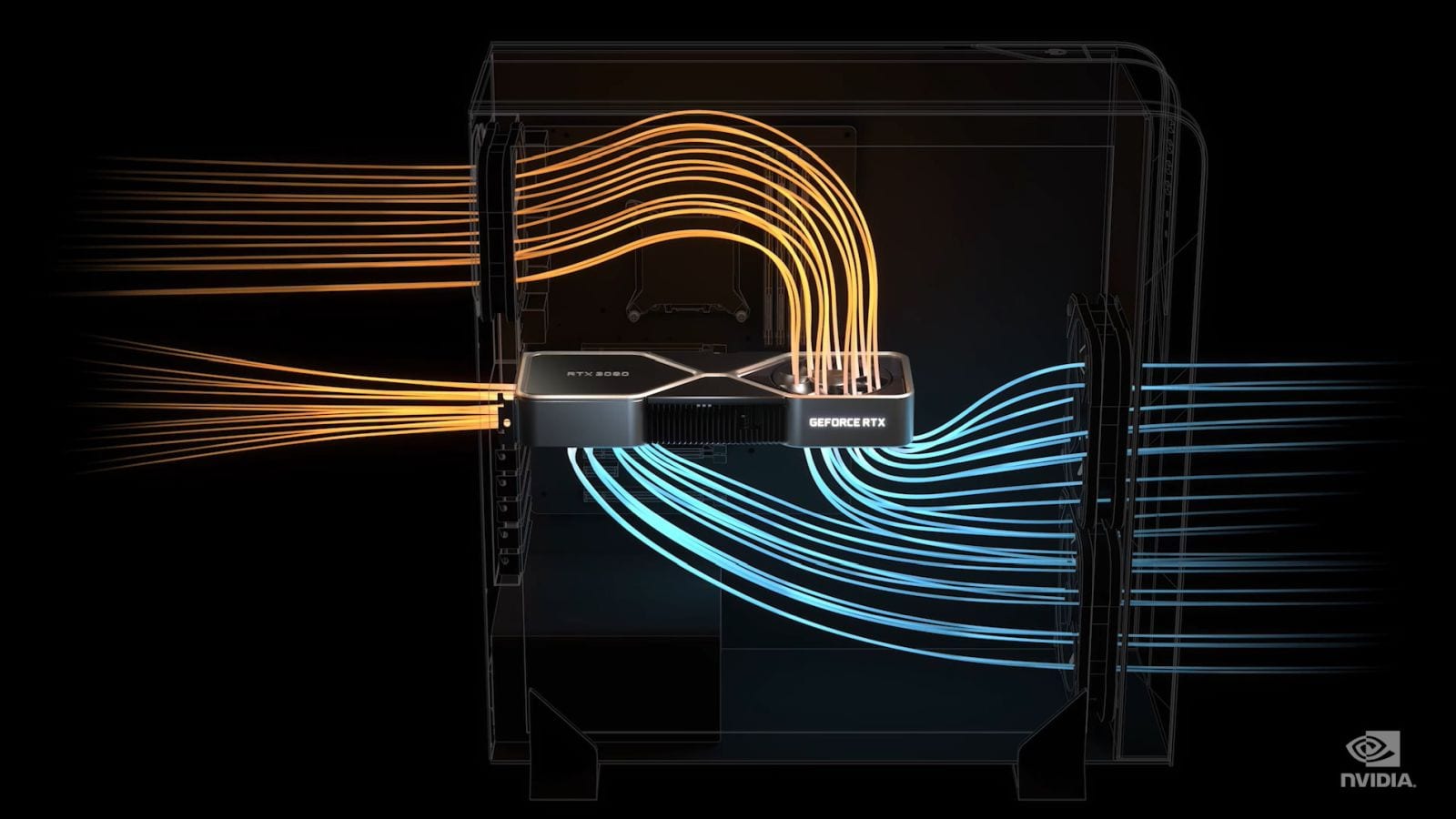

It’s one thing to deliver blisteringly fast frame rates and eye-popping resolutions, but if you do it while also blowing out my eardrums with a high pitched whine as your Harrier Jet-esque fans spin up to cool, we’ve got a problem. Thankfully NVIDIA realized this, as well as the need to cool a staggering 28 billion transistors (the 2080 Ti had 18.9 billion), and they redesigned the 30X0 series of cards to match. Like the 3080 and 3070, the 3060 Ti has a solid body construction with a new airflow system that displaces heat more efficiently, and somehow does it while being 10dB quieter than its predecessor. I’d normally point to that being a marketing claim, but I measured it myself.

Normal airflow through a case with a standard setup starts with air intake at the front, pushing it over the hard drives, and hopefully out the back. I can tell you that the case I picked up from Coolermaster was incorrectly assembled on arrival with the 200mm fan on top blowing air back into the case, so it’s always best to check the arrows on the side to make sure your case follows this path. The 2080 Ti’s design was that of a solid board that ran the length of the card with fans on the bottom pushing heat away. Unfortunately this requires that it circulates back into the path of the air flow, having to billow back up and then travel out the top and rear case fans.

The RTX 30X0 card’s airflow system is a thing of beauty. Since the card has been shrunk effectively in half thanks to the 8nm manufacturing process, this left a lot of real estate for a larger cooling block and fins, as well as a fan system to match. The fan on the top of the card (once it is mounted in the case) instead draws air up from the bottom, going through the hybrid vapor chamber heat pipe (as there is no longer a long circuit board to obstruct the airflow), and pushes it directly into the path of the normal case air path. The second fan, located on the bottom of the card and closest to the mounting bracket at the back of the case, draws air in as well, but instead of passing it through the card, it pushes the excess heat out of the rear of the card through a dedicated heat pipe vent. While we are using the 3080 to illustrate, and the 3070 and 3060 Ti are slightly smaller, the concept operates precisely the same:

You can see the results while running benchmarks. Unsurprisingly, the RTX 3060 Ti, just like the 3080 and 3070, never pushed above 79 C, no matter how hard I pushed it or at what resolution I ran it. In fact, it stayed in the low to mid 70s nearly all the time, and at an amazing 35 C when idle. More than once I’ve peeked into my case and seen that the fans for the card aren’t even spinning. This is one amazing piece of engineering.

Impossible Value

With the PlayStation 5 and Xbox Series X launched, there has been a non-stop barrage of advertisements around 4K gaming and how both platforms can deliver 120fps. What we’ve seen from the launch games is that very few are capable of delivering on both promises at once. Most games push the resolution down to 1080p to hit 120fps, though there are some absolutely gorgeous standouts like Ori and the Will of the Wisps that indeed hit that 4K/60 target. We’ll have to see what the future holds as to whether developers are able to deliver on that promise with more time with the new hardware. Why am I talking about consoles in a video card review? Well, they have a few things in common you should think about before you decide which card to purchase.

Like buying, for example, a PlayStation 5, you’ll want to think about the other components in your setup. Perhaps you have a TV that is capable of 4K, but can it handle 120Hz refresh rates, or has the manufacturer ticked that box by filling every other frame with a black frame? Similarly, does your receiver support these resolutions and framerates, or will you be forced to skip your excellent surround sound system because it would choke the signal back to 60Hz? The same applies to your PC. Yes, the 3060 Ti supports HDMI 2.1, and yes it can deliver up to 8K output, but you can see from the benchmarks that only the most optimized games are punching above 60 on this card. That said, with DLSS enabled, we are seeing incredible gains in games like Call of Duty: Black Ops Cold War, so perhaps 120+ is possible. Time will tell.

I am happy to report that, despite slimming the card for the entry market, NVIDIA hasn’t cut down on the ports. The RTX 3060 Ti still includes a single HDMI 2.1 port and three DisplayPort 1.4a ports, just like the 3070, 3080, and even the mighty 3090. My complaint with all of those other cards is that the dedicated VR port is still gone, and at this point I’ll have to simply accept that, as trading that port for VR framerates in the hundreds seems like a small price to pay.

PRICE TO PERFORMANCE:

Make no bones about it: cards like the Titan, the 2080 Ti, and the 3090 are expensive. They represent the approach of not worrying about whether they should and simply asking whether they could. I love that kind of crazy as it really pushes the envelope of technology and innovation. NVIDIA held an 81% market share for the GPU market last year, and they easily could have sat back and iterated on the 2080’s power and delivered a lower cost version with a few new bells and whistles attached. That’s not what they did. They owned the field and still came in with a brand new card that blew their own previous models out of the water. The GeForce RTX 3070 has more power than the 2080 Ti and it costs $500 versus the $1200+ you’ll fork out to get your hands on the previous generation’s king. Similarly, the RTX 3080 eclipses everything on the market, even their Titan RTX, and at $699 it does so in a fashion that beats it and takes its lunch money. The RTX 3060 Ti beats all of them in price to performance, coming in at an impressive launch price of just $399. We haven’t seen a generational leap like this, maybe ever. The fact that NVIDIA priced it the way they did makes me think they had a reason, and I don’t think that reason is AMD.

Sure, I’m certain the green team is worried about how the new generation of consoles could impact their market, but as someone who has worked in tech for a very long time, I think there could be another reason. When you go to a theme park there are signs that say “You must be this tall to ride this ride,” and they are there for your safety. But safety is rarely fun or exciting. We’ve been supporting old technology like mechanical hard drives and outdated APIs for a very long time. Windows 10 came out five years ago, but there are still plenty of folks who want to use Windows 7. Not pushing the envelope stifles innovation, and it stops us from realizing the things we could achieve. By occasionally raising that “this tall” bar to introduce a new day and a new way, we send a message to consumers that it’s time to upgrade, and we send a strong signal to developers that they can push their own envelopes. That’s how we get games like Cyberpunk 2077, and it’s how we see lighting like we do in Watch Dogs: Legion. It’s what takes us from this, to this. There’s no better time to embrace the future than right now, and at a price to performance value that has seemed impossible, the RTX 3060 Ti continues to buck the pricing trends of the past. With power exceeding the GeForce RTX 2070 Super, a card that debuted at $699, and with power that gives a $1200 2080 Ti a run for its money, the RTX 3060 Ti redefines the term “entry level” with a much higher bar than anyone could have expected, and a steal at just $399.