I love technology, especially at the inflection point between generations. We see the outgoing generation of hardware being pushed as hard as physically possible as developers discover new ways to wring every bit of power out of it. We see games finally able to take advantage of the tech, pushing the envelopes of framerate, resolution, and visual fidelity. And then, somebody like NVIDIA comes along and blows it all up.

It’s fun.

The NVIDIA GeForce RTX 4090 is going to be a challenge to review. Not that the card is difficult to work with, or that I encountered any technological issues – no, it’s that you’ll have a hard time believing what I’m telling you. When a manufacturer makes a claim of double, triple, or even a 4x uplift with their newest hardware you have to raise an eyebrow in disbelief. Generational updates are usually marginal, with 30% uplifts being the norm. Sure, that’s significant, but doubling framerate sounds like a fantasy. Doing it at 4K resolution sounds like a technological impossibility, but that’s precisely what team green is claiming. Is that even remotely possible? Only one way to find out.

The RTX 4090 is a physically imposing device, but it’s not the construction brick that some folks have made it out to be. Yes, it’s going to be taking up additional space in your case, and it’s going to crowd the second slot, but let me ask – are you using that slot? If the answer is yes, you might have a challenge here, as the GPU takes 3 slots. That’s something that could be solved by moving to a vertical GPU mount, but I digress. I’m currently using a gorgeous be quiet! Dark Base 900 Pro v2, which has a shocking amount of space, but in a future segment, I’m going to try to downsize into a midsize case. Look for future coverage there, but that’s about the end of what I’ll have to say on the size of this card. Measure and make sure it’ll fit, and evaluate whether the extra size actually matters before you worry.

There are a number of important bits of tech that have gotten a massive upgrade with the 4090, so let’s go through a few of them.

CUDA Cores:

Back in 2007, NVIDIA introduced CUDA, or Compute Unified Device Architecture, its platform for parallel processing. In the simplest of terms, CUDA cores can process and stream graphics. The data is pushed through SMs, or Streaming Multiprocessors, which feed the CUDA cores in parallel through the cache memory. As the CUDA cores receive this mountain of data, they divide the work amongst themselves, each grabbing and processing its own instructions. The RTX 2080 Ti, as powerful as it was, had 4,352 cores. The RTX 3080 Ti ups the ante with 10,240 CUDA cores, just 256 shy of the behemoth 3090. The NVIDIA GeForce RTX 4090 ships with a whopping 16,384 cores, but that doesn’t tell the whole story. We’ll circle back to this.

So…who is Ada Lovelace, and why do I keep hearing her name in reference to this card? Well, you may or may not know this already, but NVIDIA uses code names for their processors, naming them after famous scientists. Kepler, Turing, Tesla, Fermi, and Maxwell are just a few of them, with Ada Lovelace being the most current. These folks have delivered some of the biggest leaps in technology mankind has ever known, and NVIDIA recognizes their contributions. It’s a cool nod, and if that sends you down a scientific rabbit hole, then mission accomplished.

The RTX 4090 brings with it the next generation of tech for GPUs. We’ll get to Tensor and RT cores, but at its simplest, this next-gen core is able to deliver increased performance in three main areas – streaming multiprocessing, AI performance, and ray tracing. In fact, NVIDIA is stating that it can deliver double the performance across all three. I salivate at these kinds of claims, as they are very much things we can test and quantify.

What is a Tensor Core?

Here’s another example of “but why do I need it?” within GPU architecture – the Tensor Core. This technology from NVIDIA had seen wider use in high performance supercomputing and data centers before finally arriving on consumer-focused cards with the 20X0 series. Now, with the RTX 40-series, we have the fourth generation of these processors. For frame of reference, the 2080 Ti had 544 second-gen Tensor cores, while the 3080 Ti provided 320 third-gen cores and the 3090 shipped with 328. This new 4090 ships with 512 Tensor Cores, but once again, this doesn’t tell you the whole story, and once again, I’m asking you to put a pin in that thought. So what do they do?

Put simply, Tensor cores are your deep learning / AI / Neural Net processors, and they are the power behind technologies like DLSS. The RTX 4090 brings with it DLSS 3, a generational leap over what we saw with the 3000 series cards, and is largely responsible for the massive framerate uplift claims that NVIDIA has made. We’ll be testing that to see how much of the improvements are a result of DLSS and how much are the raw power of the new hardware. This is important, as not every game supports DLSS, but that may be changing.

DLSS 3

One of the things DLSS 1 and 2 suffered from was adoption. Studios would have to go out of their way to train the neural network to import images and make decisions on what to do with the next frame. The results were fantastic, with 2.0 bringing cleaner images that could actually be better than the original source image. Still, adoption at the game level would be needed. Some companies really embraced it, and we got beautiful visuals from games like Metro: Exodus, Shadow of the Tomb Raider, Control, Deathloop, Ghostwire: Tokyo, Dying Light 2: Stay Human, Far Cry 6, and Cyberpunk 2077. Say what you will about the last game – visuals weren’t the problem. Still, without engine-level adoption, the growth would be slow. With DLSS 3, that’s precisely what they did.

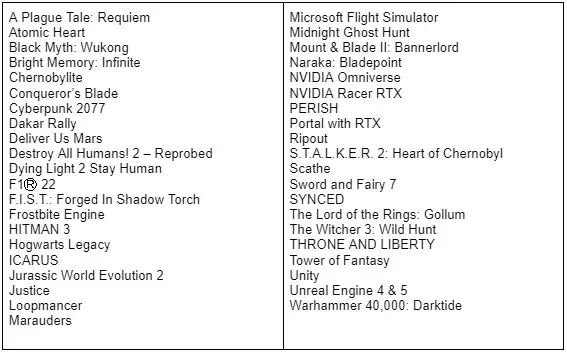

DLSS 3 is exclusive to the 4000-series, starting with the 4090. Prior generations of cards will fall back to DLSS 2.0, as this fresh installment in DLSS needs the advanced hardware (namely the 4th-Gen Tensor Cores and the new Optical Flow Accelerator) found only on 4000-series cards. While that may be a bummer to hear, there is light at the end of the tunnel – DLSS 3 is now supported at the engine level by both Epic’s Unreal Engine 4 and 5, as well as Unity, covering the vast majority of all games being released into the indefinite future. I don’t know what additional work has to be done by developers, but having it available at a deeper level should grease the skids. Here’s a quick list of what games support DLSS 3 already:

If you are unfamiliar with DLSS, it stands for Deep Learning Super Sampling, and it’s accomplished by what the name breakdown suggests. AI-driven deep learning computers will take a frame from a game, analyze it, and supersample (that is to say, raise the resolution) while sharpening and accelerating it. DLSS 1.0 and 2.0 relied on a technique where a frame is analyzed and the next frame is then projected, and the whole process continues to leap back and forth like this the entire time you are playing. DLSS 3 no longer needs these frames, instead using the new Optical Multi Frame Generation for the creation of entirely new frames. This means it is no longer just adding more pixels, but instead reconstructing portions of the scene to do it faster and cleaner.

A peek under the hood of DLSS 3 shows that the AI behind the technology is actually reconstructing ¾ of the first frame, the entirety of the second frame, and then ¾ of the third, and the entirety of the fourth, and so on. Using these four frames, along with data from the optical flow field from the Optical Flow Accelerator, allows DLSS 3 to predict the next frame based on where any objects in the scene are, as well as where they are going. This approach generates 7/8ths of a scene using only 1/8th of the pixels, and the predictive algorithm does so in a way that is completely undetectable to the human eye. Lights, shadows, particle effects, reflections, light bounce – all of it is calculated this way, resulting in the staggering improvements I’ll be showing you in the benchmarking section of this review.
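The 7/8 figure falls out of simple bookkeeping. Here’s a rough sketch, assuming the upscaler renders one quarter of each output frame’s pixels (which matches the ¾ reconstruction described above) and that every second displayed frame is generated outright. The function name is mine, purely for illustration:

```python
from fractions import Fraction


def rendered_fraction(render_scale: Fraction, generated_every: int) -> Fraction:
    """Share of displayed pixels that were rendered the traditional way.

    render_scale:    portion of an output frame's pixels rendered before
                     upscaling (assumed to be 1/4 here).
    generated_every: one out of this many displayed frames is fully
                     AI-generated (2 means every second frame).
    """
    rendered_frames = generated_every - 1
    return render_scale * rendered_frames / generated_every


rendered = rendered_fraction(Fraction(1, 4), 2)
print(rendered, 1 - rendered)  # 1/8 7/8
```

In other words, a quarter of the pixels on half of the frames is one eighth of everything you see on screen.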

Boost Clock:

The boost clock is hardly a new concept, going all the way back to the GeForce GTX 600 series, but it’s a very necessary part of wringing every frame out of your card. Essentially, the base clock is the “stock” running speed of your card that you can expect at any given time no matter the circumstances. The boost clock, on the other hand, allows the speed to be adjusted dynamically by the GPU, pushing beyond this base clock if additional power is available for use. The RTX 3080 Ti had a boost clock of 1.66GHz, with a handful of 3rd party cards sporting overclocked speeds in the 1.8GHz range. The RTX 4090 ships with a boost clock of 2.52GHz, with the idea of overclocking baked right in. That said, now’s a good time to talk about power.

Power:

The RTX 4090 is a hungry piece of tech, and you’ll need 100 more watts than the RTX 3090 to power it. The 4090 has a TDP of 450W, and NVIDIA is recommending a power supply of 850W. If you are using a PCIe Gen5 power supply (none were available at the time of writing, but I don’t know when you the reader will be reading this – I’m not your dad) you’ll have a dedicated cable from your PSU, meaning you will only be using a single power lead. Fret not if you have a Gen 4 PSU, however, as you can simply use the included adapter. It’s a four-tailed whip: connect three 6+2 PCIe cables if you just want to power the card, or connect a fourth to provide the sensing and control needed for overclocking. Provided the same headroom as its predecessor exists, there should be room for that overclocking, but that’s beyond the scope of this review. Undoubtedly there will be numerous people out there who feel the need to push this behemoth beyond its natural limits. My suggestion to you, dear reader, is that you check out the benchmarks in this review first. I think you’ll find that overclocking isn’t something you’ll need for a very, very long time.
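The three-versus-four cable behavior makes sense once you add up connector ratings. Here’s a back-of-the-envelope sketch, assuming the usual 150W rating for each 8-pin (6+2) PCIe cable. That rating isn’t stated anywhere in this review, and I’m ignoring whatever the slot itself supplies:

```python
PCIE_8PIN_WATTS = 150      # assumed per-cable rating, not from this review
RTX_4090_TDP_WATTS = 450   # TDP quoted in the review


def adapter_budget(cables: int) -> int:
    """Total power the adapter can pull from `cables` 8-pin leads."""
    return cables * PCIE_8PIN_WATTS


for cables in (3, 4):
    budget = adapter_budget(cables)
    headroom = budget - RTX_4090_TDP_WATTS
    print(f"{cables} cables: {budget}W budget, {headroom}W above TDP")
```

Under those assumptions, three cables cover the stock TDP exactly, while the fourth is where any overclocking headroom would come from.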

Memory:

The RTX 3080, 3080 Ti, 3090, and 3090 Ti all used GDDR6X, which provides the most memory bandwidth available thanks to its vastly expanded memory pipeline. The RTX 3090 Ti sported 24GB of memory on a 384-bit wide memory bus. That allows more data to be sent through the pipeline than the traditional GDDR6 you’d find on the 256-bit bus of a 3070. The RTX 4090 uses that same bus width and the same 24GB of GDDR6X, running at 21Gbps per pin for roughly 1TB/s of total bandwidth. In this case, don’t fix it if it’s not broken – no upgrade was needed here.
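If you want to see how bus width turns into bandwidth, peak memory bandwidth is just the bus width multiplied by the per-pin data rate. Here’s a quick sketch; the 21Gbps (GDDR6X on the 4090) and 14Gbps (GDDR6 on a 256-bit card) data rates are assumptions pulled from public spec sheets rather than anything I measured:

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bits per transfer * Gbps per pin / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


print(peak_bandwidth_gb_s(384, 21.0))  # 1008.0
print(peak_bandwidth_gb_s(256, 14.0))  # 448.0
```

That gap is the combined effect of the wider bus and the faster memory.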

Shader Execution Reordering:

One of the new bits of tech that is exclusive to the DLSS 3 pipeline is Shader Execution Reordering. If you are running a 4000 series card, you’ll be able to process shaders more effectively as they can be re-ordered and sequenced. Right now, shader objects (these calculate light and shadow values, as well as color gradations) are processed in the order received, meaning you are doing a lot of tasks out of order from when they’ll be consumed by the engine. It works, but it’s hardly efficient. With Shader Execution Reordering, these can be re-organized into a sequence that delivers them with other similar workloads. This has a net effect of up to 25% improvement in framerates and up to 3X improvement in ray tracing operations – something you’ll see in our benchmarks later on in this review.
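The benefit of grouping similar work can be shown with a toy model. To be clear, this is only a sketch of the concept and not how the hardware or NVIDIA’s software actually does it. It assumes shading work is issued in fixed-size groups, and that a group costs one pass for every distinct shader it contains. All of the names below are hypothetical:

```python
import random
from typing import Sequence

GROUP_SIZE = 32  # assumed group width for this toy model


def shading_passes(shader_ids: Sequence[int]) -> int:
    """Count passes, where each group pays once per distinct shader in it."""
    passes = 0
    for start in range(0, len(shader_ids), GROUP_SIZE):
        group = shader_ids[start:start + GROUP_SIZE]
        passes += len(set(group))
    return passes


random.seed(0)
# 1,024 ray hits in arrival order, each needing one of 8 different shaders.
hits = [random.randrange(8) for _ in range(1024)]

in_order = shading_passes(hits)
reordered = shading_passes(sorted(hits))  # group like with like first
print(in_order, reordered)
```

In this model, work processed in arrival order pays for nearly every shader in nearly every group, while the sorted version mostly pays once per group.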

What is an RT Core?

Arguably one of the most misunderstood aspects of the RTX series is the RT core. This core is a dedicated pipeline to the streaming multiprocessor (SM) where light rays and triangle intersections are calculated. Put simply, it’s the math unit that makes realtime lighting work and look its best. Multiple SMs and RT cores work together to interleave the instructions, processing them concurrently, allowing the processing of a multitude of light sources that intersect with the objects in the environment in multiple ways, all at the same time. In practical terms, it means a team of graphical artists and designers don’t have to “hand-place” lighting and shadows, and then adjust the scene based on light intersection and intensity. With RTX, they can simply place the light source in the scene and let the card do the work. I’m oversimplifying it, for sure, but that’s the general idea.
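To give a feel for the math involved, here is a software version of a single ray/triangle test using the well-known Möller–Trumbore algorithm. Treat it as an illustration only: I’m not claiming this is the method RT cores implement in silicon, and the function and variable names are my own.

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_hits_triangle(origin, direction, v0, v1, v2, eps=1e-9):
    """Return the distance along the ray to the hit point, or None on a miss."""
    edge1 = sub(v1, v0)
    edge2 = sub(v2, v0)
    pvec = cross(direction, edge2)
    det = dot(edge1, pvec)
    if abs(det) < eps:
        return None  # ray is parallel to the triangle's plane
    inv_det = 1.0 / det
    tvec = sub(origin, v0)
    u = dot(tvec, pvec) * inv_det  # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    qvec = cross(tvec, edge1)
    v = dot(direction, qvec) * inv_det  # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(edge2, qvec) * inv_det
    return t if t > eps else None  # ignore hits behind the ray origin


triangle = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
print(ray_hits_triangle((0.25, 0.25, 1.0), (0.0, 0.0, -1.0), *triangle))  # 1.0
print(ray_hits_triangle((2.0, 2.0, 1.0), (0.0, 0.0, -1.0), *triangle))    # None
```

A game scene runs an enormous number of these tests every frame, which is why having dedicated hardware for them matters.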

The RT core is the engine behind your realtime lighting processing. When you hear about “shader processing power”, this is what they are talking about. Again, and for comparison, the RTX 3080 Ti was capable of 34.10 TFLOPS of light and shadow processing, with the 3090 putting in 35.58. The RTX 4090? 82.58 TFLOPS. That’s not double, that’s double with a scoop of whipped cream and a cherry on top. All of those articles that question whether or not you should spend your precious GPU compute power on RTX just got their answer – you’d be dumb not to, as the 4090 has processing power to spare.
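Those TFLOPS figures line up with the standard back-of-the-envelope estimate for peak FP32 throughput: two floating-point operations (one fused multiply-add) per CUDA core per clock. Here’s a quick check. The 3090’s 10,496 core count and the 1.665GHz and 1.695GHz boost clocks come from public spec sheets rather than this review, so treat them as assumptions:

```python
def peak_fp32_tflops(cuda_cores: int, boost_clock_ghz: float) -> float:
    """Peak FP32 TFLOPS, assuming 2 operations per core per clock."""
    return cuda_cores * 2 * boost_clock_ghz / 1000


cards = {
    "RTX 3080 Ti": (10240, 1.665),  # boost clock assumed from spec sheet
    "RTX 3090": (10496, 1.695),     # cores and clock assumed from spec sheet
    "RTX 4090": (16384, 2.52),      # figures quoted in this review
}

for name, (cores, clock) in cards.items():
    print(f"{name}: {peak_fp32_tflops(cores, clock):.2f} TFLOPS")
```

The jump comes from both directions at once: far more cores, each running at a much higher clock.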

The Turing architecture cards (the 20X0 series) were the first implementations of this dedicated RT core technology. The 2080 Ti had 68 RT cores, delivering 26.9 Teraflops of throughput, whereas the RTX 3080 Ti pushes this to 80 RT cores – just shy of the 82 cores on the RTX 3090. The RTX 4090 features 128 RT Cores, but like the Tensor and CUDA cores, these are next-generation cores. So let’s talk about what all of these new cores combined are delivering.

Benchmarks:

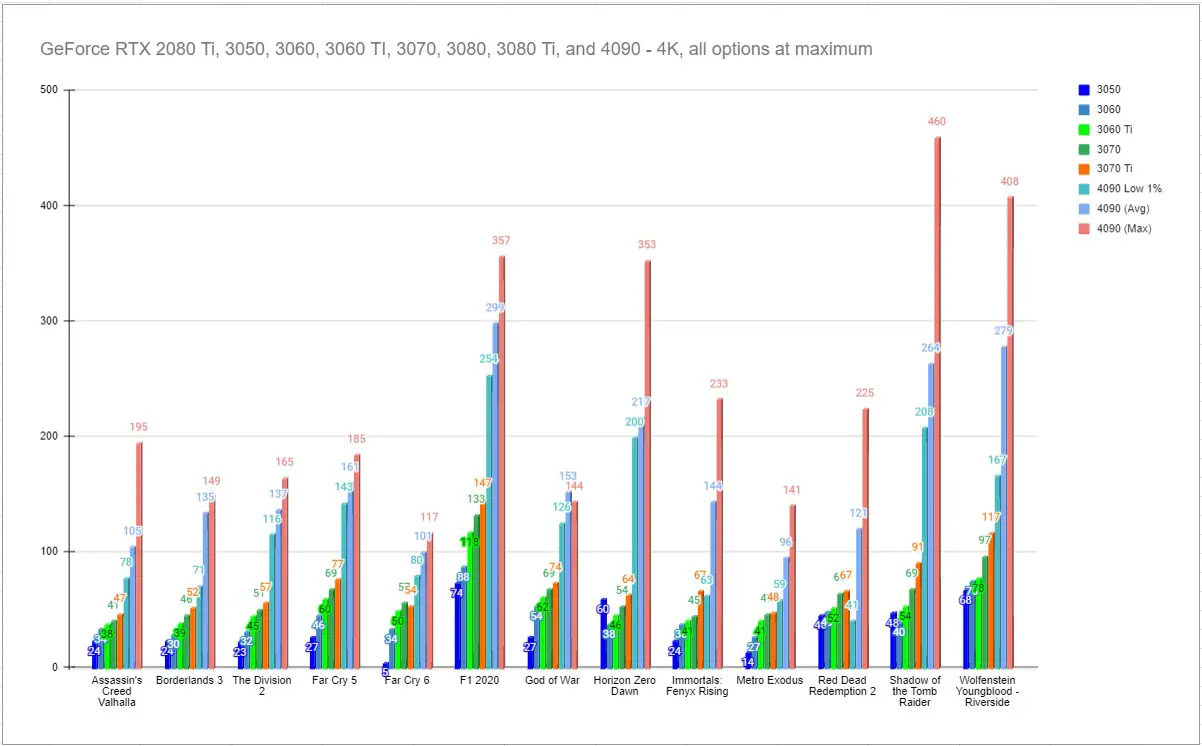

We are going to take a slightly different approach to our benchmarks this time around, and when you look at this first graph, you’ll understand why. This card is so intensely powerful that measuring it at anything less than 4K is simply a waste of time. It also extends the scale of the graph so ridiculously that there’s no point in comparing it with anything other than the highest end of the previous generation. I’ve dubbed this the “ridiculous” graph – enjoy!

Games like Wolfenstein, Rise of the Tomb Raider, and Metro: Exodus were some of the earliest adopters of Deep Learning Super Sampling, and we see the longer maturity window’s effect represented here. Since the scale is so mangled, let’s just point at the big spires before we get to a more readable graph.

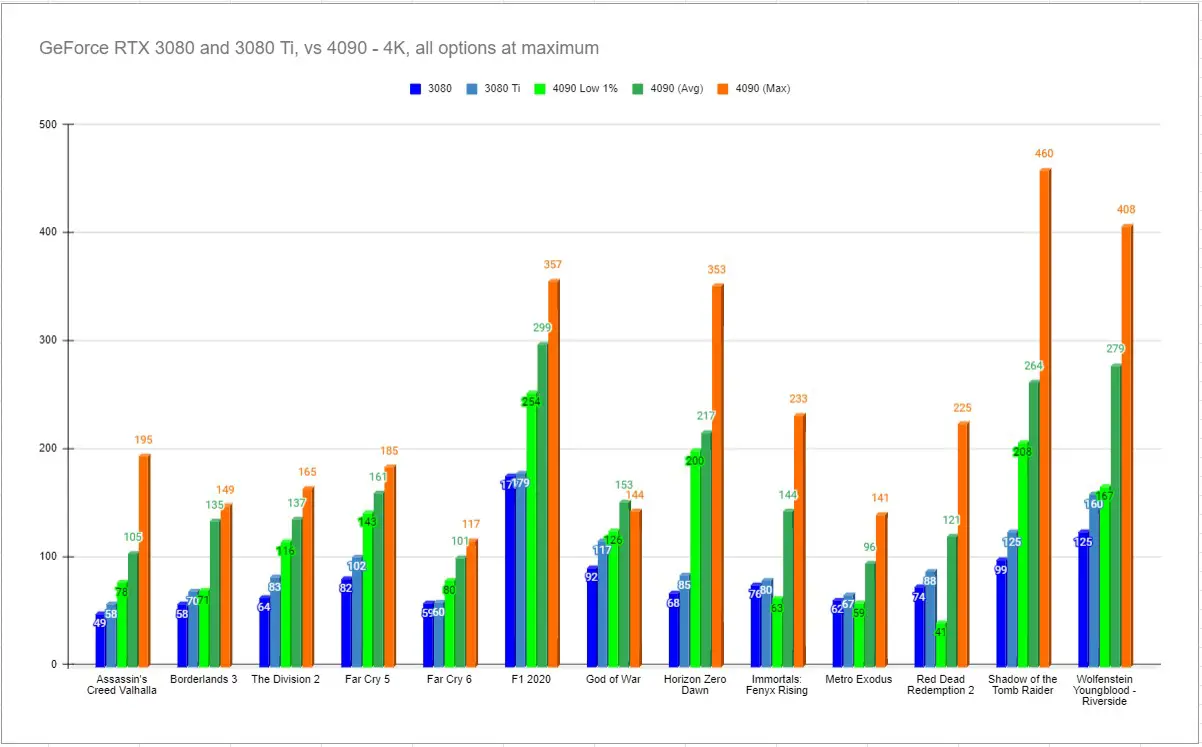

Joking aside, we pulled in the RTX 3080 and 3080 Ti to showcase our previous benchmarking suite against the 4090. These two flagship cards from the previous generation can at least run in the same race, even if they stand absolutely no chance whatsoever. These are the average numbers, but we’ll dig into the question of how much of an effect DLSS 3 has in a moment.

Now that you can see a bit more of the detail, one thing becomes insanely clear. If you were to combine the power of the RTX 3080 and the power of the RTX 3080 Ti, you’d still be a little short of what the RTX 4090 delivers. Let me say that again – two ENTIRE RTX 3080s to reach the power of this one card. We see this play out nicely in games like Horizon Zero Dawn, Immortals Fenyx Rising, and F1 2020. Again, all of these were run with all settings maxed, and at 4K resolution. Absolutely staggering.

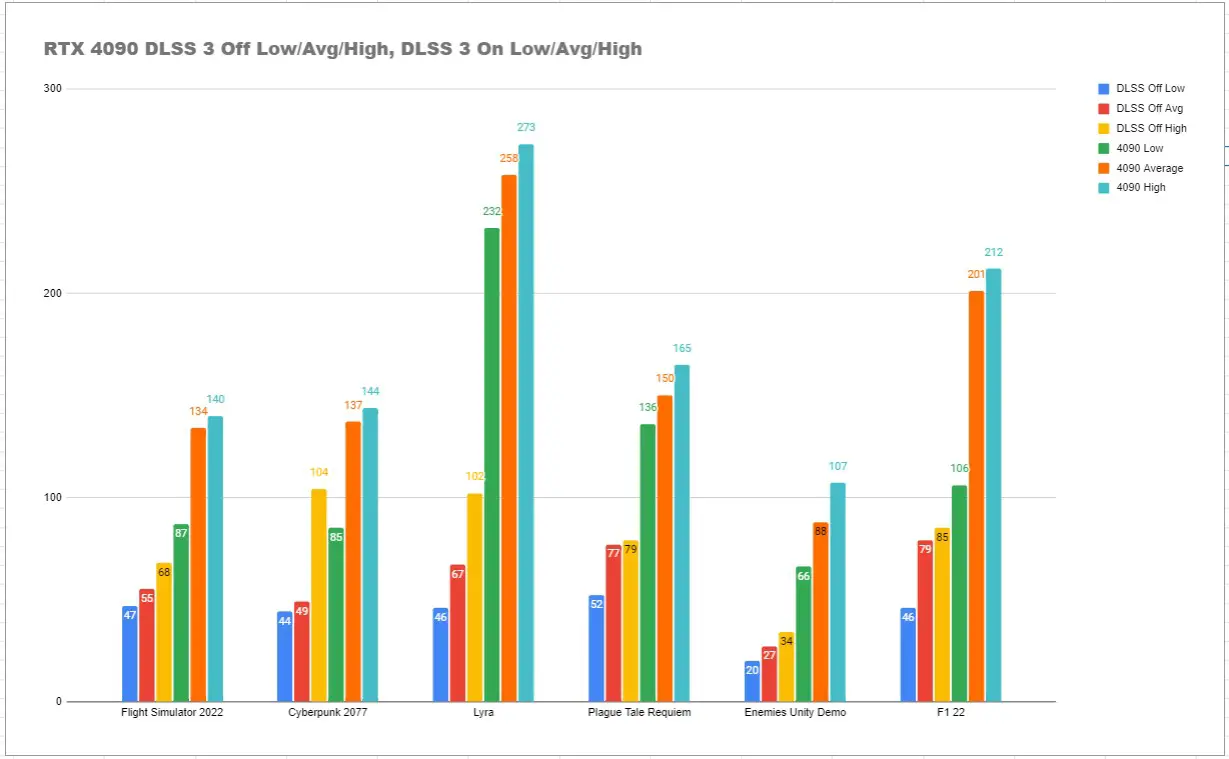

I wanted to introduce a new class of modern titles that would push this new generation of cards as hard as possible, though as you’ll see below, it hardly even does that. Still, these are the most demanding titles, and we’ll be adding more as we go. I also wanted to showcase how much of a difference DLSS 3 makes over just raw power. To that end, we are bringing in Microsoft Flight Simulator, Cyberpunk 2077, A Plague Tale: Requiem, F1 22, and the Unity demo of Enemies, as well as the Lyra Unreal Engine 5 demo project. These were recorded with all settings at maximum, all RTX options enabled, Reflex Boost turned on, and at 4K resolution. Once again I found myself flabbergasted at the results. I ran these multiple times, and confirmed them with NVIDIA, as I simply could not believe the increases. Observe:

If you bring up YouTube and look up DLSS, you’ll find entirely too many channels asking “Is DLSS worth it?” with red arrows and surprised or scrunched faces. Well, just take a second and look at this graph again. Why in the wide world of sports would you NOT enable DLSS 3? You could say you are leaving frames on the table, but honestly it’s worse than that – if you don’t enable DLSS 3, you are leaving literally hundreds of frames behind at zero additional cost. Just turn it on already!

Sound:

I could pull out the sound measurement devices, and with a card this big you’d think I’d need to, but NVIDIA has completely redesigned the vapor chamber / passthrough structure of the RTX 4090. It’s an imposing card, so you’d expect it to be downright loud, but…my case fans are louder, even at load. At idle, I don’t hear it at all. Yes, I’m using a be quiet! case, but it’s not magic – the card is just that quiet. My case sits about 2 feet away from where I sit, so I should be hearing this thing, but somehow I just don’t.

Price:

This is going to come up in every discussion, so you knew this was coming. The MSRP for the GeForce RTX 4090 is $1599. Chip manufacturer TSMC has increased pricing across the board, and since they provide chips to AMD, Intel, NVIDIA, and more, it’s very likely that what we are seeing with this price is the tip of the proverbial iceberg. I don’t want to make value judgments on your behalf, but I will at least acknowledge that this is a premium price. What I hope is at least abundantly clear is just how much that price will get you.

I mentioned that I love the boundaries between generations, and as we reach the end of this review, I’ll tell you more about why. Yes, there are lots of oohs and aahs for new and shiny gear, but what these moments represent is the start of the improvements. If you read this review at launch, DLSS 3 is exactly 1 day old. As developers continue to work on fresh ways to take advantage of it, and as drivers continue to improve, we’ll see big jumps in performance. This same set of tests a year from now is very likely to yield very different results, and to the positive. Looking at the RTX 3080 Ti’s scores at launch versus today, you’ll see upwards of a 30-40% increase in framerates on a great many titles, and possibly more if they had fully embraced DLSS 2.0. Now, we are looking at the start of the 4000 series hardware, and a new generation of AI improvements in DLSS 3. The absolutely insane readings we have pulled today are just the beginning. And what a glorious beginning it is.