We recently reviewed the GeForce RTX 4070 Super and came away impressed with both its pricing and its performance. Launching at $599, it offered a 13 to 15% performance improvement over the 4070 and landed just under the 4070 Ti. Now we get our first look at the RTX 4070 Ti Super, and it’s time to see where it lands in the stack. Much of the background in this review will be familiar (and the same will likely hold for the upcoming 4080 Super), but we’ll hit the specs as well as our usual suite of benchmarks. Off we go!

The RTX 4070 Ti Super I’m reviewing is from Asus. It moves away from the pass-through fin design of NVIDIA’s Founders Edition cards, replacing the one larger fan with three smaller ones. The result is noisier than you might like in performance mode, with the fans running constantly and ramping up during heavier loads. With the physical toggle on the card switched to quiet mode, the fans appear to run at a slower speed, though neither mode shuts them off entirely at idle like NVIDIA’s reference design. This is another example where team green’s efficient cooling design wildly exceeds anything the partner channels can bring to the table.

The biggest comparison folks are likely to make is the approximate delta between the RTX 4070 Ti (our review here) and the 4070 Ti Super, though it’s equally valid to look at the 4070 Super (our review) as well. As such, we’ll be doing precisely that, comparing these three cards, plus a quick glance back at the 3080 Ti for rasterized, DLSS 1, and DLSS 2 performance. It’s important to note that reviews are a point in time, and driver maturity and game patches are likely to push numbers higher. As such, focus less on the raw numbers than on the deltas between them. Or don’t. I’m not your dad.
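Since the advice here is to watch the deltas rather than the raw numbers, here is a minimal Python sketch of the percentage-difference arithmetic used throughout this review. The function name `percent_delta` and the fps figures in the example are hypothetical placeholders, not measurements from our testing.

```python
def percent_delta(baseline: float, contender: float) -> float:
    """Return how much faster (positive) or slower (negative) `contender`
    is than `baseline`, expressed as a percentage of the baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (contender - baseline) / baseline * 100.0


# Hypothetical average-fps figures for illustration only.
ti_fps = 100.0
ti_super_fps = 108.0
print(f"{percent_delta(ti_fps, ti_super_fps):+.1f}%")  # prints "+8.0%"
```

Note that the baseline matters: a card that is 8% faster than another is not the same as the other being 8% slower, which is why we always state which card is the reference.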

In our early DLSS 3 reviews we focused exclusively on DLSS 2, DLSS 3, and rasterized performance. Now there’s a fresh contender in the space: DLSS 3.5 and Frame Generation. All 4000 series cards are capable, and more games are taking full advantage. Moreover, the minor artifacting issues are becoming less frequent and, thankfully, less severe. While I’ve long been a proponent of the tech, recent advances are breathing a great deal of life into resolutions and framerates that would otherwise be impossible to achieve. The RTX 4070 Ti Super, like the original Ti and now the 4070 Super, is aimed at 1440p, so that’s where we’ll focus our efforts. That said, the additional VRAM and horsepower easily allow this card to handle 4K in most, and probably all, cases. Let’s look at the testing rig.

The test rig:

Gigabyte Z790 AORUS MASTER

Intel 13900K CPU

32GB DDR5 @ 7300 MT/s

M.2 NVMe @ 7700 MB/s

Asus GeForce RTX 4070 Ti Super triple-fan variant at stock speeds

A general complaint with the mid-tier cards is that some are well-equipped with VRAM while others come up a bit short. A great many features and functions lean on VRAM, and none more so than higher resolutions. Higher quality settings push utilization further, and running out of VRAM creates all sorts of bottlenecks, as your system then spills over into system RAM or, worse, a swap file on disk. That means a trip to your local storage (NVMe, hopefully), slowing things further still. So how much VRAM do we really need?
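To put the resolution side of that question in perspective, the sketch below estimates how much memory a handful of full-screen buffers take at 1440p and 4K. The buffer list and bytes-per-pixel values are illustrative assumptions, not taken from any specific game or engine; real titles use many more buffers, and textures at high quality settings usually dominate the total. The useful takeaway is the scaling: 4K has 2.25x the pixels of 1440p, so every per-pixel buffer grows by that same factor.

```python
# Hypothetical per-pixel budget: these byte counts are illustrative
# assumptions, not figures from any particular game or engine.
RENDER_TARGET_BYTES_PER_PIXEL = {
    "color_hdr": 8,       # e.g. 16-bit float RGBA
    "depth_stencil": 4,
    "normals": 4,
    "albedo": 4,
    "motion_vectors": 4,
}


def render_target_mib(width: int, height: int) -> float:
    """Approximate memory, in MiB, taken by the per-pixel buffers above."""
    bytes_per_pixel = sum(RENDER_TARGET_BYTES_PER_PIXEL.values())
    return width * height * bytes_per_pixel / (1024 ** 2)


for name, (w, h) in {"1440p": (2560, 1440), "4K": (3840, 2160)}.items():
    print(f"{name}: {render_target_mib(w, h):.1f} MiB")
```

With these assumed buffers that works out to roughly 84 MiB at 1440p and 190 MiB at 4K. The absolute numbers are small next to 12GB or 16GB; the point is that everything tied to screen size, including the extra buffers upscalers and frame generation keep around, scales up together.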

The RTX 3080, the current go-to card according to the Steam Hardware Survey for December 2023, was a powerful card, but its 10GB of VRAM made the Ti version that much more attractive, with another 2GB of headroom. The 4000 series of cards have been more generous with VRAM, with the 4090 carrying 24GB of GDDR6X memory, the 4080 shipping with 16GB, and the 4070 Ti coming with 12GB. NVIDIA resisted the temptation to cut back memory on the 4070 Super, keeping the same 12GB of GDDR6X and the same 192-bit bus width. The RTX 4070 Ti Super pushes into interesting territory with 16GB of GDDR6X. Coupled with a 256-bit bus width, it raises eyebrows as it bumps into 4080 territory. It replaces the original 4070 Ti entirely, with enough power to all but supplant the 4080.
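Bus width matters because peak memory bandwidth is simply the bus width multiplied by the per-pin data rate. A quick sketch, assuming both the 4070 Ti and the 4070 Ti Super run their GDDR6X at 21 Gbps per pin (a figure not stated in this review), shows why the jump from 192-bit to 256-bit is significant:

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s.

    bus_width_bits: number of data pins on the memory bus
    data_rate_gbps: per-pin data rate in Gbit/s
    Dividing by 8 converts bits to bytes.
    """
    return bus_width_bits * data_rate_gbps / 8


# Assumption: both cards run GDDR6X at 21 Gbps per pin.
ti = peak_bandwidth_gb_s(192, 21.0)        # 504.0 GB/s
ti_super = peak_bandwidth_gb_s(256, 21.0)  # 672.0 GB/s
print(ti, ti_super, f"{ti_super / ti - 1:.0%}")  # 504.0 672.0 33%
```

Under that assumption the wider bus alone is worth a third more peak bandwidth, which helps explain why the Ti Super’s lead tends to grow at higher resolutions.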

We don’t lean heavily on synthetic benchmarks, as they aren’t a great way to tell you how games will perform. That said, the RTX 4070 Ti Super tends to be around 8-10% faster than the RTX 4070 Ti at 4K, thanks to the additional bus width and memory headroom. At 1440p it still leads the 4070 Ti, but not by quite as much, around 6-7% in tests like Cinebench and Blender. More analysis will be needed with a wider array of games. Let’s dig a little deeper under the hood to understand not only the numbers, but what these components do.

CUDA Cores:

Back in 2007, NVIDIA introduced CUDA, or Compute Unified Device Architecture, its parallel computing platform, and the GPU cores that run it became known as CUDA cores. In the simplest of terms, these CUDA cores process graphics and other highly parallel work. The data is pushed through SMs, or Streaming Multiprocessors, which feed the CUDA cores in parallel through the cache memory. As the CUDA cores receive this mountain of data, the work is divided up amongst them and each core processes its share. The RTX 2080 Ti, as powerful as it was, had 4,352 cores. The RTX 3080 Ti upped the ante with 10,240 CUDA cores, just 256 shy of the behemoth 3090’s 10,496. The NVIDIA GeForce RTX 4090 ships with a whopping 16,384 cores. The GeForce RTX 4070 had 5,888 CUDA cores, and the 4070 Ti had 7,680. The GeForce RTX 4070 Super ships with 7,168 CUDA cores, placing it well above the 4070 and just below the RTX 4070 Ti. Unsurprisingly, given that it’s built on the larger AD103 die instead of the AD104, the RTX 4070 Ti Super pushes up to 8,448 current-gen CUDA cores, below the 9,728 contained in the RTX 4080. These numbers don’t quite tell the whole story. We’ll circle back to this.
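The idea of work being divided amongst many cores is easiest to see in how a CUDA program indexes its threads. Below is a sequential Python emulation, not real GPU code: each (block, thread) pair computes its global index as block index times block size plus thread index, the same pattern CUDA kernels express as `blockIdx.x * blockDim.x + threadIdx.x`. The doubling operation and the default `block_dim=4` are arbitrary choices for illustration.

```python
def emulate_kernel_launch(data, block_dim=4):
    """Sequentially emulate a CUDA-style launch that doubles every element.

    Each (block_idx, thread_idx) pair stands in for one GPU thread; on real
    hardware these would run in parallel across the SMs' CUDA cores.
    """
    n = len(data)
    grid_dim = (n + block_dim - 1) // block_dim  # enough blocks to cover n
    out = [0] * n
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            i = block_idx * block_dim + thread_idx  # global thread index
            if i < n:  # the last block may have spare threads
                out[i] = data[i] * 2
    return out


print(emulate_kernel_launch([1, 2, 3, 4, 5, 6, 7, 8, 9, 10]))
# [2, 4, 6, 8, 10, 12, 14, 16, 18, 20]
```

On a GPU, every iteration of those two loops would be an independent thread, which is why piling on more CUDA cores lets a card chew through more pixels (or any other data-parallel work) per clock.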

Since the launch of the 4000 series of cards we’ve seen time and again that these newest technologies deliver a staggering framerate improvement when combined with the latest DLSS technology. In some cases, NVIDIA suggests the framerate can double over the previous generation, and we saw that very thing in our previous reviews. Arguably the RTX 4070 Ti Super has left the mid-tier behind and is edging into the bottom rung of the top tier. As such there is enough horsepower not just to push past 60fps in current games, but to reach triple digits easily for rasterized gaming at 1440p or 4K. Throw Frame Generation into the mix and you’ve got a powerhouse.

What is a Tensor Core?

Here’s another example of “but why do I need it?” within GPU architecture: the Tensor Core. This technology from NVIDIA saw use in high-performance supercomputing and data centers before finally arriving on consumer-focused cards with the RTX 2000 series. Now, with the RTX 40-series, we have the fourth generation of these processors. For frame of reference, the 2080 Ti had 544 second-gen Tensor cores, the 3080 Ti provided 320, and the 3090 shipped with 328. The RTX 4090 shipped with 512 Tensor Cores, the RTX 4080 has 304, the RTX 4070 has 184, and the Ti variant has 240. The RTX 4070 Super recently shipped with 224, and now the RTX 4070 Ti Super ships with 264. Given the complexity and advancements inside the new generation of Tensor Cores, you can’t quite do a 1:1 number battle with previous-generation core counts. Put a pin in that thought and let’s look at what a Tensor core does.

Put simply, Tensor cores are your deep learning / AI / neural network processors, and they are the power behind technologies like DLSS. The RTX 4000 series of cards brings with it DLSS 3 (and now 3.5!), a generational leap over what we saw with the 3000 series cards and largely responsible for the massive framerate uplift claims NVIDIA has made. We’ve seen time and again the improvements DLSS 3 and 3.5 can make in current games, as well as Frame Generation when the game supports it and the user enables it. Not every game supports DLSS 3 or 3.5 yet, but adoption is ramping quickly.
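At heart, a Tensor core accelerates one operation: a matrix multiply-accumulate, D = A x B + C, performed on a small matrix tile in a single step. Neural networks like the one behind DLSS are built mostly out of this operation. The plain-Python sketch below spells out that arithmetic element by element so you can see what the hardware does in bulk; the function name is hypothetical, and the tile sizes and number formats real Tensor cores use vary by generation and aren’t modeled here.

```python
def mma_tile(a, b, c):
    """Compute D = A x B + C for small square tiles given as lists of lists.

    A Tensor core performs this fused multiply-accumulate on a whole tile
    at once; this version spells out the same arithmetic one element at a time.
    """
    n = len(a)
    return [
        [c[i][j] + sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
        for i in range(n)
    ]


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
c = [[1, 1], [1, 1]]
print(mma_tile(a, b, c))  # [[20, 23], [44, 51]]
```

The triple loop hidden in that comprehension is exactly the work a Tensor core collapses into a single hardware operation, which is why core-for-core comparisons across generations are so slippery.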

DLSS 3, 3.5, and beyond

One of the things DLSS 1 and 2 suffered from was slow adoption. Studios had to go out of their way to train the neural network to ingest images and make decisions on what to do with the next frame. The results were fantastic, with 2.0 bringing cleaner images that could actually look better than the original source image. Still, each game had to adopt it individually. Some companies really embraced it, and we got beautiful visuals from games like Metro Exodus, Shadow of the Tomb Raider, Control, Deathloop, Ghostwire: Tokyo, Dying Light 2: Stay Human, Far Cry 6, and Cyberpunk 2077. Say what you will about that last game, visuals weren’t the problem. Still, without engine-level adoption, growth would be slow. With DLSS 3, engine-level support is precisely what NVIDIA went after.

DLSS 3 is an exclusive feature of the 4000 series cards. Prior generations of cards fall back to DLSS 2, as this fresh installment in DLSS needs advanced hardware (namely the 4th-gen Tensor Cores and the new Optical Flow Accelerator) found only on 4000-series cards. While that may be a bummer to hear, there is light at the end of the tunnel: DLSS 3 is now supported at the engine level by Epic’s Unreal Engine 4 and 5, as well as Unity, which together cover a large share of the games being released for the foreseeable future. I don’t know what additional work developers still have to do, but having it available at a deeper level has greased the skids.

To date, over 500 games use DLSS 2, and just over 100 already support DLSS 3. Another 30 or so have been announced with support arriving shortly or at launch, including Diablo IV, Dragon’s Dogma 2, Skull and Bones, Vampire: The Masquerade – Bloodlines 2, STALKER 2: Heart of Chornobyl, Atomic Heart, Dying: 1983, and more. Additionally, EA has recently begun supporting DLSS in its Frostbite Engine, so the Madden series, Battlefield series, Need for Speed Unbound, Dead Space series, and others will all benefit from native support. Unity now supports DLSS, which means improvements for games like Project Ferocious, Low-Fi, Singularity, Faded, Sand, Replaced, Hollow Cocoon, The Quinfall, and more. Unreal Engine 4 and 5 support covers the next Witcher game, Chrono Odyssey, Banishers: Ghosts of New Eden, Nightingale, Indika, Tekken 8, Palworld, Outcast: A New Beginning, and hundreds of other unannounced games built on these engines. While DLSS has seen slow adoption in the past, future support is practically a vertical climb.

If you are unfamiliar with DLSS, it stands for Deep Learning Super Sampling, and it does what the name suggests. A deep learning model, trained on NVIDIA’s supercomputers and run on your card’s Tensor cores, takes a frame from a game, analyzes it, and supersamples it (that is to say, raises the resolution) while sharpening it. DLSS 1.0 and 2.0 relied on a technique where a frame is analyzed and the next frame is then projected, a back-and-forth process that repeats the entire time you are playing. DLSS 3 no longer needs these full frames, instead using the new Optical Multi Frame Generation to create entirely new frames from just a fraction of the original pixels. This means it is no longer just adding more pixels, but constructing whole new AI frames in between the original frames to do it faster and cleaner.
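To make “supersampling from a lower resolution” concrete, this sketch computes the internal render resolution behind each DLSS Super Resolution mode for a 1440p output. The per-axis scale factors are the commonly cited defaults and should be treated as assumptions; individual games and DLSS versions may use different values, and the function name is hypothetical.

```python
# Commonly cited per-axis render scales for DLSS Super Resolution modes.
# Treat these as assumptions: individual games and DLSS versions can differ.
DLSS_SCALE = {
    "Quality": 2 / 3,
    "Balanced": 0.58,
    "Performance": 0.5,
    "Ultra Performance": 1 / 3,
}


def internal_resolution(out_w: int, out_h: int, mode: str) -> tuple[int, int]:
    """Resolution the game renders natively before DLSS upscales to out_w x out_h."""
    s = DLSS_SCALE[mode]
    return round(out_w * s), round(out_h * s)


for mode in DLSS_SCALE:
    w, h = internal_resolution(2560, 1440, mode)
    share = (w * h) / (2560 * 1440)
    print(f"{mode:>17}: {w}x{h} ({share:.0%} of output pixels rendered)")
```

Because the scale applies to both axes, Performance mode at 1440p renders 1280x720, only a quarter of the output pixels, and leaves the rest to the network.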

A peek under the hood of DLSS 3 shows that the AI behind the technology is reconstructing ¾ of the first frame, the entirety of the second frame, then ¾ of the third, the entirety of the fourth, and so on. Combining the rendered frames with data from the optical flow field produced by the Optical Flow Accelerator lets DLSS 3 work out where any objects in the scene are, as well as where they are going, and generate the frames in between. This approach generates 7/8ths of the displayed pixels while rendering only 1/8th natively, and does so in a way that is almost undetectable to the human eye. Lights, shadows, particle effects, reflections, light bounce: all of it is handled this way, resulting in the staggering improvements I’ll be showing you in the benchmarking section of this review.
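The 7/8ths figure falls out of simple arithmetic. Reconstructing ¾ of each rendered frame means only ¼ is rendered natively (what Performance mode does), and every other displayed frame is generated entirely by AI. The sketch below, with a hypothetical helper name, computes that split exactly using Python’s `fractions` module:

```python
from fractions import Fraction


def displayed_pixel_split(rendered_share: Fraction, generated_per_rendered: int):
    """Return (natively rendered, AI-produced) fractions of all displayed pixels.

    rendered_share: fraction of each real frame's pixels the GPU renders natively
    generated_per_rendered: fully AI-generated frames inserted per real frame
    """
    frames = 1 + generated_per_rendered
    native = rendered_share / frames  # generated frames add no native pixels
    return native, 1 - native


# Assumption: 1/4 of each real frame rendered, one generated frame per real frame.
native, ai = displayed_pixel_split(Fraction(1, 4), 1)
print(native, ai)  # 1/8 7/8
```

Using exact fractions rather than floats keeps the result readable as 1/8 and 7/8 instead of 0.125 and 0.875.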

The launches of DLSS 1, 2, and 3 each carried some oddities with image quality. Odd artifacting and ghosting, shimmering around edges and UI elements, and other visual hiccups hit some games harder than others. More recent patches have fixed many of these issues, though flickering UI elements can still appear when Frame Generation is enabled. This mostly occurs when a text box or UI element is in motion, such as a tag above a racer’s head or subtitles above a character. I saw this fairly extensively in Atomic Heart, though it was fixed in Cyberpunk 2077. Your mileage may vary, clearly, but it’s getting quite a bit better. Still, when you go down a high-speed elevator in Star Wars Jedi: Survivor you’ll likely see an odd trailing shadow above your character’s head. Thankfully this is becoming less and less of an issue through game patches and driver updates, though most of this relies on the developer more than NVIDIA. You gotta patch your games, folks.

A more recent development I’ve seen some developers use for games that came out before the launch of the 4000 cards is hybrid DLSS: you may see DLSS 2 options alongside a DLSS 3 Frame Generation toggle. Hogwarts Legacy and Hitman III come to mind, as both use these two DLSS technologies in tandem to provide a cleaner experience. If you want to get into a bit of tinkering, you can occasionally fix issues by replacing the game’s DLSS .dll, though this should fall to the developer, not gamers, to fix. As I mentioned, your mileage may vary, but you’ll probably be too busy enjoying a stupidly high framerate and resolution to notice.

Boost Clock:

The boost clock is hardly a new concept, going all the way back to the GeForce GTX 600 series, but it’s a necessary part of wringing every frame out of your card. Essentially, the base clock is the “stock” running speed you can expect from your card at any given time, no matter the circumstances. The boost clock, on the other hand, lets the GPU adjust its speed dynamically, pushing beyond the base clock when additional power headroom is available. The RTX 3080 Ti had a boost clock of 1.66GHz, with a handful of third-party cards sporting overclocked speeds in the 1.8GHz range. The RTX 4090 ships with a boost clock of 2.52GHz, and the 4080 is right behind it at 2.51GHz. The RTX 4070 has a boost clock of 2.475GHz, with the Ti version coming in at 2.61GHz. The 4070 Super adopted the 2.475GHz clock speed of the regular 4070 instead of splitting the difference as we see in other areas. The RTX 4070 Ti Super matches the 4070 Ti with a boost clock of 2.61GHz, pushing it slightly above the vaunted RTX 4090.

What is an RT Core?

Arguably one of the most misunderstood aspects of the RTX series is the RT core. This is a dedicated unit inside each streaming multiprocessor (SM) that calculates where light rays intersect the triangles making up a scene. Put simply, it’s the math unit that makes realtime lighting work and look its best. Multiple SMs and RT cores work together to interleave the instructions and process them concurrently, so a multitude of light sources can be traced as they intersect with the objects in the environment in multiple ways, all at the same time. In practical terms, it means a team of graphic artists and designers don’t have to “hand-place” lighting and shadows and then adjust the scene based on light intersection and intensity. With RTX, they can simply place the light source in the scene and let the card do the work. I’m oversimplifying it, for sure, but that’s the general idea.
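The ray-triangle test that RT cores accelerate fits in a few lines of code. This is a minimal Python sketch of the well-known Möller-Trumbore algorithm, chosen here for illustration; NVIDIA doesn’t publish the exact method its hardware uses, so don’t read this as how the RT core is implemented. RT cores also accelerate the bounding-volume traversal that decides which triangles get tested at all, which isn’t shown. The function returns the distance along the ray to the hit point, or `None` on a miss.

```python
from typing import Optional


def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_triangle_hit(origin, direction, v0, v1, v2, eps=1e-9) -> Optional[float]:
    """Möller-Trumbore test: distance t along the ray to the hit, or None on a miss."""
    e1, e2 = _sub(v1, v0), _sub(v2, v0)
    p = _cross(direction, e2)
    det = _dot(e1, p)
    if abs(det) < eps:                 # ray is parallel to the triangle's plane
        return None
    inv_det = 1.0 / det
    s = _sub(origin, v0)
    u = _dot(s, p) * inv_det           # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    q = _cross(s, e1)
    v = _dot(direction, q) * inv_det   # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = _dot(e2, q) * inv_det
    return t if t > eps else None      # ignore hits behind the ray origin


tri = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
print(ray_triangle_hit((0.25, 0.25, 1.0), (0.0, 0.0, -1.0), *tri))  # 1.0
```

A single frame of path-traced lighting can involve many millions of tests like this, which is why dedicating silicon to them pays off.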

The RT core is the engine behind your realtime lighting, but it works alongside the shader hardware rather than replacing it. When you hear about “shader processing power”, that figure is the FP32 throughput of the CUDA cores, and it’s the number usually quoted. Again, and for comparison, the RTX 3080 Ti was capable of 34.10 TFLOPS of shading, with the 3090 putting in 35.58. The RTX 4090 supplies 82.58 TFLOPS. The 4080 brings around 48.7 TFLOPS, and the 4070 pairs 46 RT cores with 29 TFLOPS of FP32 shading power. The RTX 4070 Ti gave us 60 RT cores and 40.1 TFLOPS, with the 4070 Super only slightly lower at 56 RT cores and 35.48 TFLOPS. The RTX 4070 Ti Super bumps this up to 66 RT cores and 44.1 TFLOPS of shading power. Looking at the numbers alone, you’d think that puts it only modestly above the RTX 3090, but the generational gap between these two shading technologies is night and day (and could properly shade the night and day while it’s at it!), so it’s not a 1:1 correlation.
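The shading figures above follow from a standard formula: peak FP32 throughput equals CUDA cores times 2 floating-point operations per clock (one fused multiply-add) times boost clock. Plugging in the core counts and boost clocks listed in this review reproduces them; the function name is hypothetical.

```python
def fp32_tflops(cuda_cores: int, boost_ghz: float) -> float:
    """Peak FP32 throughput in TFLOPS.

    Each CUDA core can retire one fused multiply-add per clock, which counts
    as 2 floating-point operations; cores x 2 x GHz gives GFLOPS, / 1000 gives TFLOPS.
    """
    return cuda_cores * 2 * boost_ghz / 1000


cards = {
    "RTX 4070":          (5888, 2.475),
    "RTX 4070 Super":    (7168, 2.475),
    "RTX 4070 Ti":       (7680, 2.61),
    "RTX 4070 Ti Super": (8448, 2.61),
    "RTX 4090":          (16384, 2.52),
}
for name, (cores, ghz) in cards.items():
    print(f"{name:<18} {fp32_tflops(cores, ghz):5.2f} TFLOPS")
```

These are theoretical peaks that assume every core is busy at full boost, so they can’t capture the architectural differences between generations discussed above.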

Benchmarks:

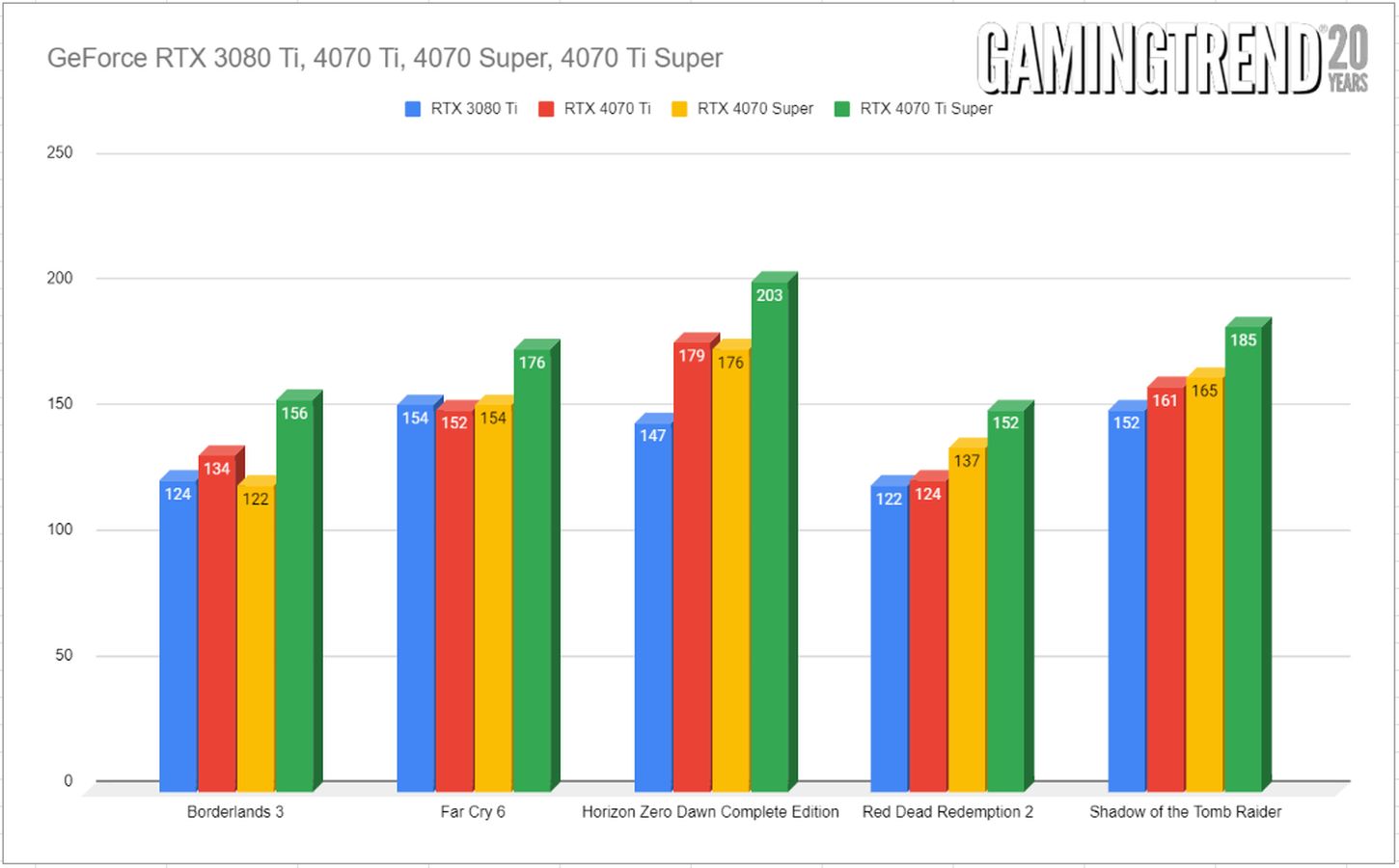

Starting off with previous-generation games without DLSS 3, we see a reasonable improvement in some games and a drastic one in others. The gains are about what you’d expect for ray-traced titles, but the card also performs exceedingly well in older titles. Since so many readers are still using an RTX 3080, let’s compare last generation’s consumer flagship to this generation’s midrange card, arguably a new member of the top tier. Next, let’s take a look at DLSS-enabled titles.

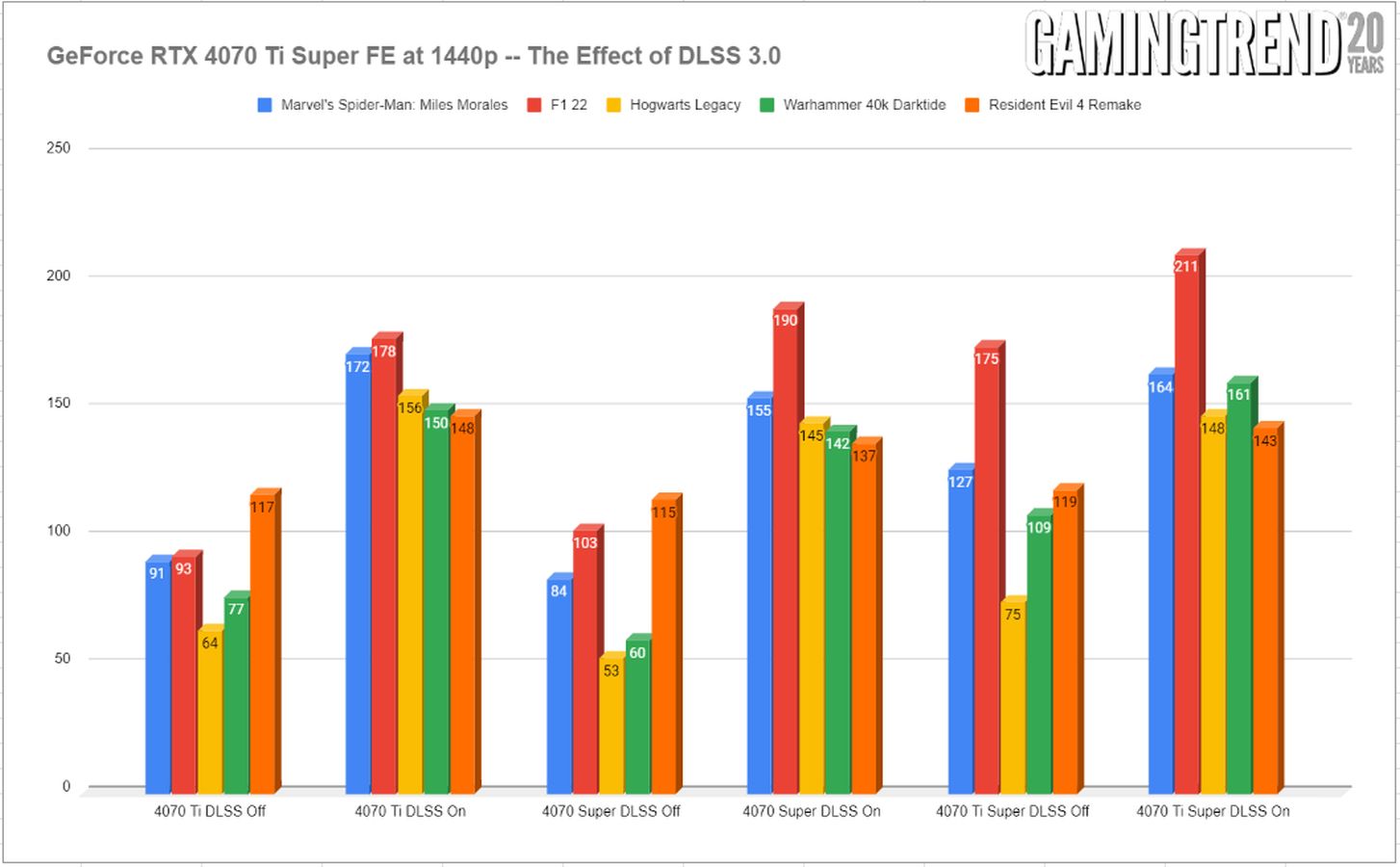

A broader look at current titles reveals that the RTX 4070 Ti Super is more than a simple bump over the non-Super variant, or even the non-Ti version. In most cases we are seeing an average increase of more than 20fps over the previous 4000 cards. It’s also immediately clear that the effect of DLSS 3 is dramatic, and in many cases the card goes from good to great. Obviously some games benefit more than others, but DLSS 3 is often the difference between merely playable framerates and a smooth experience. At 1440p there’s enough horsepower to go around, giving the player more choice in how aggressive to get with the DLSS slider, or whether to turn it off entirely. In fact, there’s likely more than enough extra horsepower to have your cake and eat it too, hitting 4K and 60+ fps without too much stress.

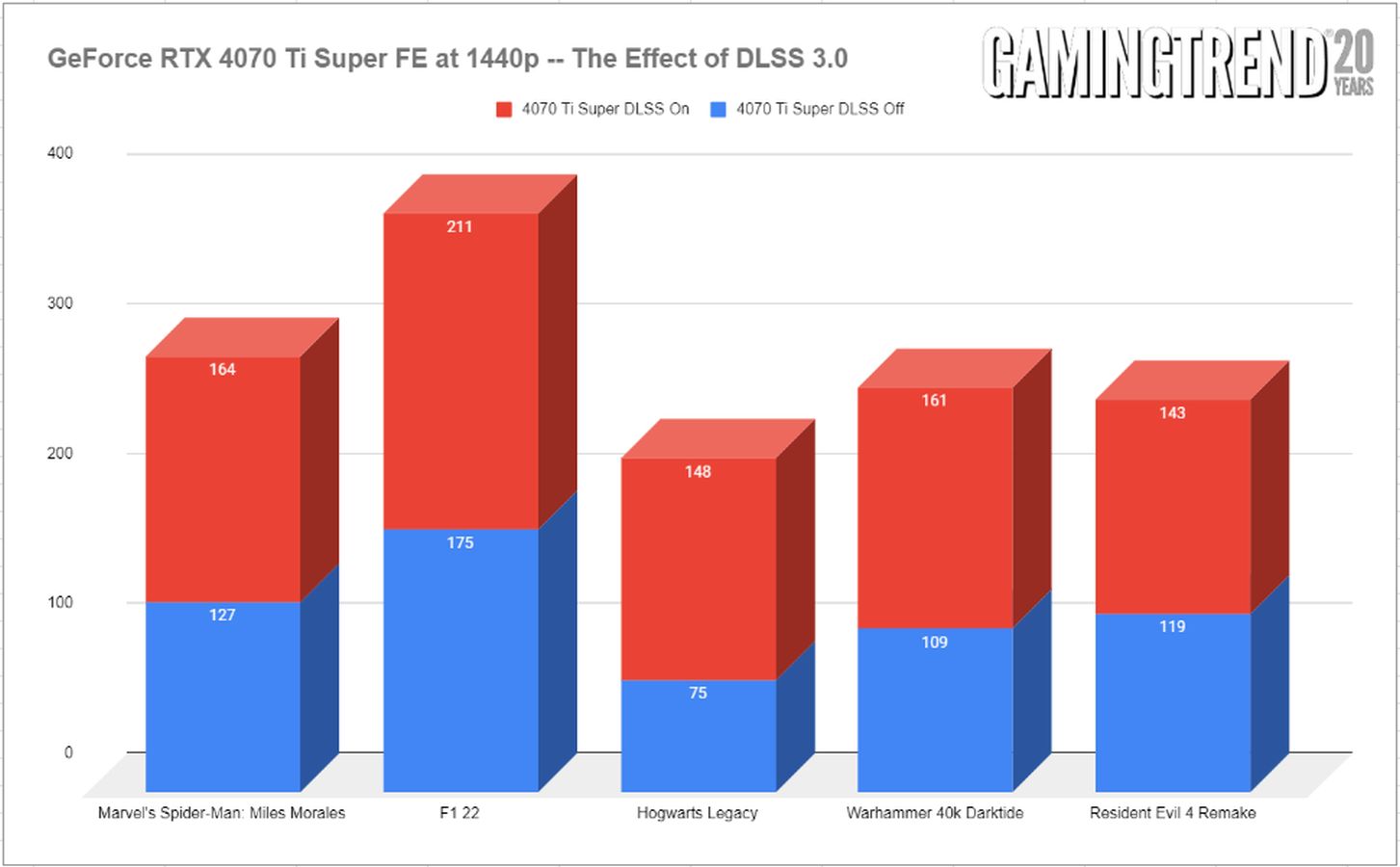

To reiterate my point around DLSS 3, I sliced the data a different way visually, as I have with other 4000 series card reviews. A stacked graph shows just how much heavy lifting AI upsampling is doing. At the price of the odd wobble of a UI element, I’ll take it.

Pricing:

While the pricing news isn’t quite as sweet as it was with the GeForce RTX 4070 Super, the wider memory bus, extra RUs, additional memory, and a general shift toward the higher-end cards were always going to command a higher price. The Ada Lovelace advances in rendering technology (the AV1 encoder is over 40% more efficient than H.264), double the ray tracing power, Frame Generation, and somehow an even smaller power footprint, just to scratch the surface, have pushed the MSRP to $799. Some third-party providers have priced their cards anywhere from $849 to $1,199, though to be frank I’ve not seen anything to justify the additional cost. I’ve asserted before that it’s unwise to pick up the previous generation of cards, as you lose out on warranty and all of the 4000 series Frame Generation power. Here it’s a tougher ask, but still a justifiable price for the additional power.