If you had to guess what video card tops the charts for Steam users, would your answer be the GeForce GTX 1060? Amazingly, that’s precisely what you’ll find in this link. A true workhorse, the GTX 1060 was a fantastic balance of power and performance. NVIDIA is looking to see if they can make lightning strike twice with a fresh entry into the mainstream consumer space, one that would bring the power of Ray Tracing, Reflex, Broadcast, DLSS, and AI to the entry market. Today we crack open the GeForce RTX 3060 XC from EVGA to find out.

The first thing I noticed when I installed the RTX 3060 is that it is downright compact. This will easily fit in a small form factor case. In fact, it measures just 9.5” in length, and 4.4” in width (or height if you mount it vertically). It’s still a two-slot card, but for all of its power, this isn’t going to bend your PCI-e slot. Different manufacturers will modify and adjust the device to make it their own, but, generally speaking, this card will fit in just about anything.

The 3000 series cards are the first to include HDMI 2.1 support, allowing for 4K output at 120Hz, and the RTX 3060 is no exception. This card has one HDMI 2.1 port, as well as three DisplayPort 1.4a ports. I have personally driven three monitors and a TV off of the RTX 3060, and all without a hitch, so it should serve just about any need.

Cooling:

One of the best innovations NVIDIA brought to the market was their revolutionary pull-through air flow technology. It serves as the foundation for all of their 3000-series cards, compensating for the higher TDP (Thermal Design Power, which is the maximum “real world” subsystem power draw, and how much heat can be dissipated by the cooling solution) of these new cards. That said, this design is all but exclusive to the reference design, better known as the “Founders Edition” versions of the boards. Today we are looking at EVGA’s GeForce RTX 3060 XC, which appears to have a traditional block and fan method. Upon closer inspection, however, there is more than meets the eye. The finned construction runs the entire length of the card, with two angled fans to help pull heat through an approximately 1” by 2” cutout through the PCB, letting air flow through the device. In practice, and even at max load for over 8 hours, I never saw the temperature rise above 79 degrees Celsius, exactly the same as the RTX 3060 Ti FE. That could be a testament to the cooling solution I use, but the full-length finned pull-through cooling seems to be working precisely as designed, even if EVGA opted for a more basic industrial design than NVIDIA’s reference.

In the RTX 2060 we saw a TDP of 160 Watts, with the RTX 3060 coming in just above that at 170 Watts. As such, a 450W power supply is the recommendation, again making it perfect for small form factor chassis which may have a smaller form factor PSU to go with it. Connecting to power on the EVGA RTX 3060 means using a standard 8-pin connector — there is not a 12-pin power connector like we’ve seen in their other 3000-series cards. It’s obviously not all that important, but something worth noting if you were expecting the adapter we’ve seen so frequently this generation.

Jargon — Let’s decipher what’s under the hood:

Just like in our 3080, 3070, and 3060 Ti reviews, I want to cover some jargon to ensure you have a clear picture of the hardware under the hood in the GeForce RTX 3060. Just like the 3070 and 3060 Ti, the GeForce RTX 3060 uses GDDR6 instead of GDDR6X. Surprisingly, however, it increases the RAM from 8GB in the 3060 Ti to 12GB of available VRAM. Much like the 3060 Ti, the 3060 is aimed squarely at the 1440p and 1080p space, but with a little more leg room.

The more pixels your graphics card pushes, the more VRAM you need. Features like Ray Tracing and anti-aliasing work the card hard, as do higher refresh rates and resolutions. Refresh rate, put simply, is how many times per second the scene is redrawn (e.g. 60 times a second versus 30 times a second), and doing that at 4K resolution versus 1080p means drawing four times the pixels. Let’s talk about the processor in this card before we dig into the numbers.
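To put a number on that “four times the pixels” claim, here is a minimal Python sketch. The resolution dimensions are the standard ones for each label, and the helper names are my own, purely for illustration.

```python
# Standard pixel dimensions (width, height) for the resolutions discussed here.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def pixels_per_frame(name):
    """Number of pixels the card has to draw for a single frame."""
    width, height = RESOLUTIONS[name]
    return width * height


def pixels_per_second(name, refresh_hz):
    """Pixels drawn each second when redrawing the scene refresh_hz times."""
    return pixels_per_frame(name) * refresh_hz


baseline = pixels_per_frame("1080p")
for name in RESOLUTIONS:
    ratio = pixels_per_frame(name) / baseline
    print(f"{name}: {pixels_per_frame(name):,} pixels per frame ({ratio:.2f}x 1080p)")
```

Multiply any of those by the refresh rate (say, `pixels_per_second("4K", 60)`) and it becomes clear why both resolution and framerate lean so hard on the card.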

The GeForce RTX 3080 runs on the GA102 processor, which in its full configuration sports over 28 billion transistors on a shockingly small 628 mm² die, with 10752 shading units, 336 texture mapping units, 112 render output units, 336 tensor cores, and 84 ray tracing acceleration cores. That GA102 GPU is what powers the RTX 3080 and RTX 3090, and it’s truly bleeding edge. The RTX 3070 and RTX 3060 Ti use a GA104 GPU, which is a smaller processor using a 392 mm² die with 17,400 million transistors. The RTX 3060 uses a new processor, the GA106. The GA106 uses a more “average” size die at 276 mm² and 13,250 million transistors, and as configured in the RTX 3060 it sports 48 render output units, 112 Tensor cores, and 28 ray tracing acceleration cores. All that’s gee-whiz interesting if you are a tech dork like me, but let’s talk about performance and what these components do for gaming, shall we?

The GeForce RTX 3060 Ti (at $399 retail) shipped with a base clock speed of 1410 MHz, with a boost clock of 1665 MHz. The RTX 3060 comes in slightly lower at a base clock of 1320 MHz, but with a surprisingly higher boost clock of 1777 MHz. Similarly, the Ti version had a memory clock of 1750 MHz, with an effective throughput of roughly 14 Gbps. The 3060 again comes in slightly higher with a memory clock of 1875 MHz, granting it a full 15 Gbps of throughput. This is likely to compensate for a slightly narrower memory interface, but more on that in a moment. First, let’s unpack the rest of the specialty tech under the hood.
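If you are wondering how a memory clock in MHz turns into a throughput in Gbps, the two pairs of numbers above are consistent with a simple multiplier of eight transfers per listed clock cycle. The sketch below assumes that multiplier (it is inferred from the figures in this review, not taken from a spec sheet), and the function name is hypothetical.

```python
# Assumption: the listed GDDR6 memory clock yields 8 bits per pin per cycle.
# This factor is inferred from 1750 MHz -> 14 Gbps and 1875 MHz -> 15 Gbps.
TRANSFERS_PER_CLOCK = 8


def effective_gbps(memory_clock_mhz):
    """Per-pin data rate in Gbps for a given listed memory clock in MHz."""
    return memory_clock_mhz * TRANSFERS_PER_CLOCK / 1000


for card, clock_mhz in {"RTX 3060 Ti": 1750, "RTX 3060": 1875}.items():
    print(f"{card}: {clock_mhz} MHz -> {effective_gbps(clock_mhz):.0f} Gbps per pin")
```

Keep in mind that this is a per-pin rate. How much data actually moves each second also depends on how many pins there are, which is where the memory interface width comes in.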

What is an RT Core?

Arguably one of the most misunderstood aspects of the RTX series is the RT core. This core is a dedicated pipeline to the streaming multiprocessor (SM) where light rays and triangle intersections are calculated. Put simply, it’s the math unit that makes realtime lighting work and look its best. Multiple SMs and RT cores work together to interleave the instructions, processing them concurrently, allowing the processing of a multitude of light sources intersecting with the objects in the environment in multiple ways, all at the same time. In practical terms, it means a team of graphical artists and designers don’t have to “hand-place” lighting and shadows, and then adjust the scene based on light intersection and intensity — with RTX, they can simply place the light source in the scene and let the card do the work. I’m oversimplifying it, for sure, but that’s the general idea.
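For the curious, here is roughly what “calculating a ray and triangle intersection” looks like in software. This is a minimal Python sketch of the well-known Möller–Trumbore test, offered only as an illustration of the kind of math involved. It is not a description of how NVIDIA implements it in silicon, and the helper names are my own.

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a, b):
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def ray_hits_triangle(origin, direction, v0, v1, v2, eps=1e-9):
    """Return the distance along the ray to the hit point, or None on a miss."""
    edge1 = sub(v1, v0)
    edge2 = sub(v2, v0)
    pvec = cross(direction, edge2)
    det = dot(edge1, pvec)
    if abs(det) < eps:
        return None  # ray is parallel to the triangle's plane
    inv_det = 1.0 / det
    tvec = sub(origin, v0)
    u = dot(tvec, pvec) * inv_det
    if u < 0.0 or u > 1.0:
        return None  # hit point falls outside the triangle
    qvec = cross(tvec, edge1)
    v = dot(direction, qvec) * inv_det
    if v < 0.0 or u + v > 1.0:
        return None  # hit point falls outside the triangle
    t = dot(edge2, qvec) * inv_det
    return t if t > eps else None  # ignore hits behind the ray origin


# A ray fired straight down at a triangle lying flat on the ground plane.
triangle = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
print(ray_hits_triangle((0.25, 0.25, 1.0), (0.0, 0.0, -1.0), *triangle))
```

A real scene repeats a test like this for enormous numbers of rays and triangles every frame, which is why having hardware dedicated to it matters.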

The Turing architecture cards (the 20X0 series) were the first implementations of this dedicated RT core technology. The 2080 Ti had 68 RT cores, delivering 29.9 Teraflops of throughput, whereas the RTX 3080 has 68 2nd-gen RT cores with 2x the throughput of the Turing-based cards, delivering 58 Teraflops of RTX power, and the 3070 shipped with 46 of them to dish out 40 Teraflops of shadow-processing, real-time ray tracing, shading, and compute power. The RTX 3060 Ti has 38 RT Cores, providing 32.4 Teraflops of power. The RTX 3060 ships with 28 2nd-generation RT cores, granting roughly 25 Teraflops for all of the graphical bells and whistles that are now becoming common in games.

What is a Tensor Core?

Here’s another example of “but why do I need it?” within GPU architecture: the Tensor Core. This relatively new technology from NVIDIA had seen wider use in high performance supercomputing or data centers before finally arriving on consumer-focused cards in the latter and more powerful 20X0 series cards. Now, with the RTX 30X0 series of cards we have the third generation of these processors. The 2080 Ti had 544 second-gen cores, the 3080 has 272 third-gen Tensor cores, the 3070 comes with 184 of them, the RTX 3060 Ti has 152, with the RTX 3060 coming in at 112. For basis of comparison, the RTX 2060 had 240, but this third generation of Tensor cores is better in every way, so don’t fall into the trap of thinking that more is better. So, what do they do?

Tensor cores are used for AI-driven learning, and we see this more directly applied to gaming via DLSS, or Deep Learning Super Sampling. More than marketing buzzwords, DLSS can take a few frames, analyze them, and then generate a “perfect frame” by interpreting the results using AI, with help from supercomputers back at NVIDIA HQ. The second pass through the process uses what it learned about aliasing in the first pass and then “fills in” what it believes to be more accurate pixels, resulting in a cleaner image that can be rendered even faster. Amazingly, the results can actually be cleaner than the original image, especially at lower resolutions, and having less to process means more frames can be rendered using the power saved. It’s literally free frames. DLSS 3.0 is still swirling in the wind, but very soon we may see this applied more broadly than it is today. We’ll have to keep our eyes peeled for that one, but when it does release, these Tensor cores are the components to do the work. That’s all fancy, but wouldn’t you rather see it in action? Here’s a quick snippet from Control that does exactly that.

DLSS 2.0 was introduced in March of 2020, and it took the principles of DLSS and set out to resolve the complaints users and developers had, while improving the speed. To that end, they reengineered the Tensor core pipeline, effectively doubling the speed while still maintaining the image quality of the original, or even sharpening it to the point where it looks better than the source! For the user community, NVIDIA exposed the controls to DLSS, providing three modes to choose from: Performance (for maximum framerate), Balanced, and Quality (which looks to deliver the best quality final image). Developers saw the biggest boon with DLSS 2.0 as they were given a universal AI training network. Instead of having to train each game and each frame, DLSS 2.0 uses a library of non-game-specific parameters to improve graphics and performance, meaning that the technology could be applied to any game should the developer choose to do so. Game development cycles being what they are, and with the tech only hitting the street last year, it’s likely we’ll see more use of DLSS 2.0 as we head towards Virtual E3 2021, and even more at the end of the year.
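To get a feel for why those three modes trade image quality for framerate, it helps to look at how many pixels the card actually renders before DLSS upscales the result. The sketch below is a rough illustration only: the per-axis scale factors are commonly cited figures for DLSS 2.0 and are an assumption on my part, not numbers from NVIDIA’s material for this card, and individual games may differ.

```python
# Assumed per-axis render scales for each DLSS 2.0 mode (commonly cited values,
# not taken from this review; treat them as illustrative).
DLSS_SCALE = {
    "Quality": 2 / 3,
    "Balanced": 0.58,
    "Performance": 0.5,
}


def internal_resolution(output_width, output_height, mode):
    """Approximate resolution rendered before upscaling to the output size."""
    scale = DLSS_SCALE[mode]
    return round(output_width * scale), round(output_height * scale)


def pixel_fraction(mode):
    """Fraction of the output pixel count that is actually rendered."""
    return DLSS_SCALE[mode] ** 2


for mode in DLSS_SCALE:
    width, height = internal_resolution(2560, 1440, mode)
    print(f"{mode}: renders about {width}x{height} "
          f"({pixel_fraction(mode):.0%} of the pixels at 1440p)")
```

Under those assumptions, Performance mode at a 4K output renders a 1080p image internally, which is a quarter of the pixels. That is where the “free frames” come from.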

Memory Interface Width:

The biggest difference for the 3060 versus the 3060 Ti is the memory interface. The 3060 Ti has a 256-bit interface, whereas the 3060 comes with a 192-bit interface. Essentially, this narrows the pipeline that carries data between the GPU and its video memory. It’s likely the reason why the clock rates are slightly higher: to compensate for fewer cores and a little less interface width. That said, compared to the 1060 or even the 2060, the RTX 3060’s bandwidth is in a category unto itself.
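To see how much that narrower interface costs, and how much the faster memory wins back, you can work out the peak memory bandwidth of each card. The sketch below uses the standard formula of per-pin data rate times bus width, divided by eight to convert bits to bytes. The inputs are the 14 Gbps and 15 Gbps rates and the 256-bit and 192-bit widths quoted in this review, and the function name is my own.

```python
def bandwidth_gb_per_s(data_rate_gbps, bus_width_bits):
    """Peak memory bandwidth in GB/s: per-pin rate x pins, bits to bytes."""
    return data_rate_gbps * bus_width_bits / 8


CARDS = {
    "RTX 3060 Ti": {"data_rate_gbps": 14, "bus_width_bits": 256},
    "RTX 3060": {"data_rate_gbps": 15, "bus_width_bits": 192},
}

for card, spec in CARDS.items():
    print(f"{card}: {bandwidth_gb_per_s(**spec):.0f} GB/s")

# What the 3060 would manage at the Ti's 14 Gbps memory speed.
print(f"RTX 3060 at 14 Gbps: {bandwidth_gb_per_s(14, 192):.0f} GB/s")
```

That works out to 448 GB/s for the Ti and 360 GB/s for the 3060. At the Ti’s memory speed the 3060 would sit at 336 GB/s, so the faster memory claws back some, but not all, of the gap.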

The RTX 3060 Ti has a memory clock of 1750 MHz, with a 14 Gbps effective throughput. For reference, the RTX 3070 has 96 raster operation pipelines (ROPs), which help perform anti-aliasing and provide the adjusted image to the framebuffer. The 3060 Ti slims that to 80, with the RTX 3060 slimming it further to 48. TMUs are Texture Mapping Units and they manipulate the bitmap image and place it in the environment, and the 3060 Ti has 152 of them. The RTX 3060 has 112 of them. Finally, the 3060 Ti has 4864 cores for processing all of the graphics and features, while the 3060 slims back to 3584. That’s a lot of numbers, but what does it all add up to in the end? Let’s dig into the rest of the tech and some benchmarks to better answer that question.
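One quick way to make sense of that pile of numbers is to express the RTX 3060 as a fraction of the 3060 Ti. The sketch below uses only the core, TMU, and ROP counts quoted above. The dictionary layout and function name are my own, and a spec ratio is of course not a performance prediction.

```python
# Unit counts as quoted in this review.
SPECS = {
    "RTX 3060 Ti": {"cores": 4864, "TMUs": 152, "ROPs": 80},
    "RTX 3060": {"cores": 3584, "TMUs": 112, "ROPs": 48},
}


def ratio(unit):
    """RTX 3060 count divided by RTX 3060 Ti count for a given unit type."""
    return SPECS["RTX 3060"][unit] / SPECS["RTX 3060 Ti"][unit]


for unit in ("cores", "TMUs", "ROPs"):
    print(f"{unit}: the 3060 has {ratio(unit):.0%} of the 3060 Ti's count")
```

The cores and TMUs both land at roughly 74% of the Ti, while the ROPs drop to 60%, which lines up with the card being comfortable at 1080p and working harder as the resolution climbs.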

Gaming Benchmarks:

If your eyes are rolling into the back of your head with all this technical jargon, you aren’t alone. Let’s get into the benchmarks, but here’s the TL;DR for those of you who wanted me to cut to the chase about 20 paragraphs ago: the RTX 3060 is the power equivalent of an RTX 2070, if not slightly better. It easily dusts the GTX 1060, or any 10-series card including the mighty 1080 Ti. It can keep up with a great many 20-series cards, and makes one thing very clear: while it can’t keep up with its bigger Ti brother, this card is punching above its weight. Let’s get into the numbers.

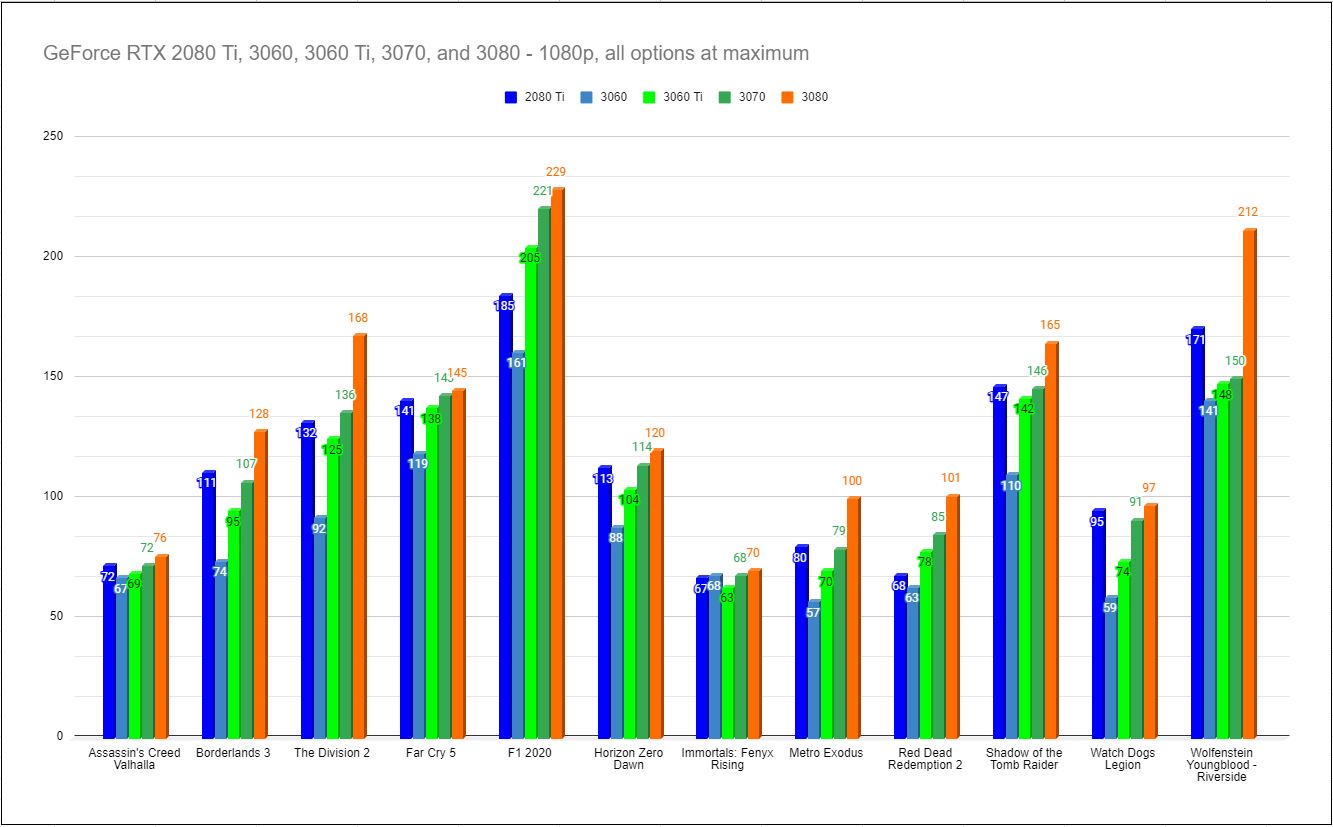

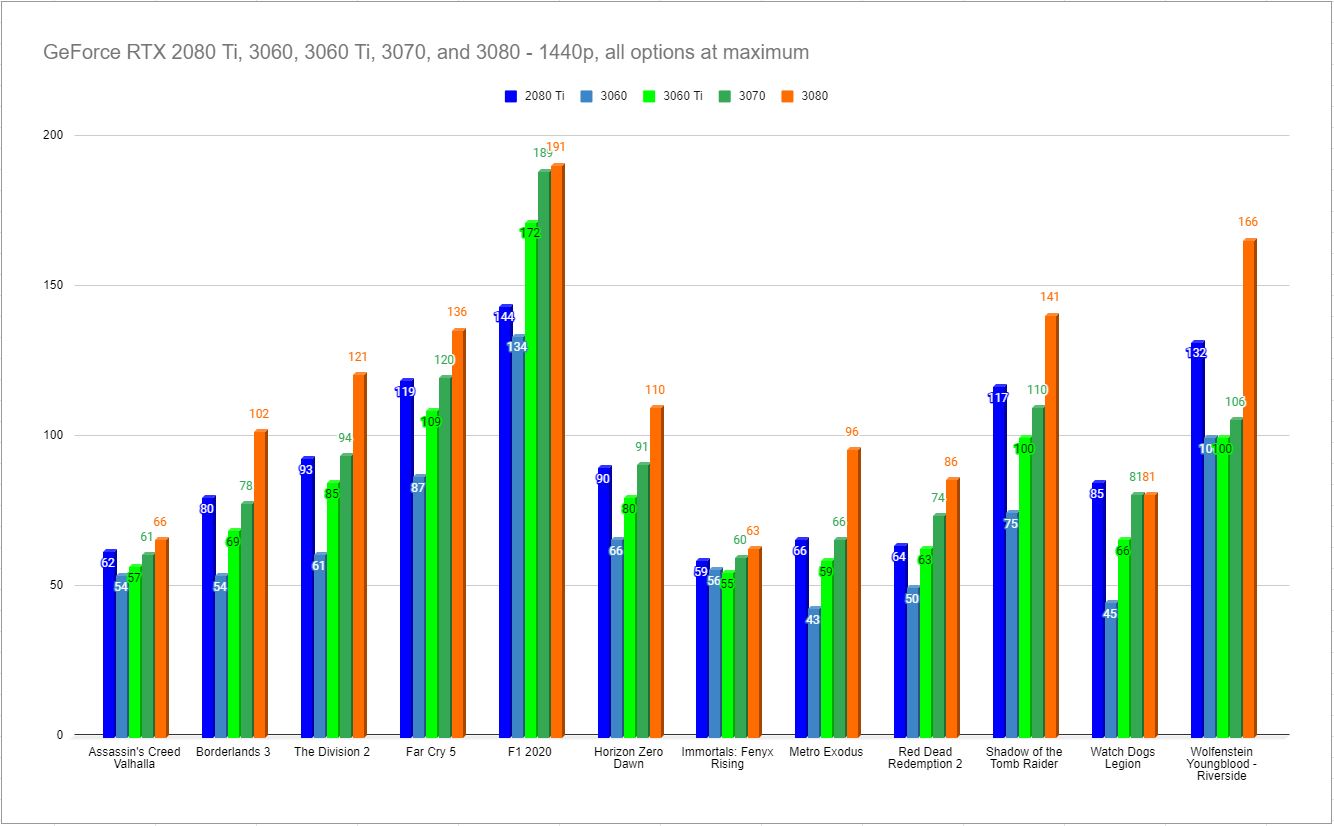

Right out of the gate, it’s very clear that, much like the RTX 3060 Ti, the 3060 is very much aimed at running all games at 60fps or better at 1080p, where the vast majority of people live. We see that borne out by the numbers, even on current AAA games with all of the bells and whistles lit up. While it can’t keep up with the RTX 3060 Ti, that Ti will cost you an additional $80.

Frankly, I was surprised by how well the RTX 3060 performs at 1440p. Again, with all options cranked to the maximum, even games like Red Dead Redemption 2 can still hit a respectable 50fps, with Horizon Zero Dawn hitting 66fps.

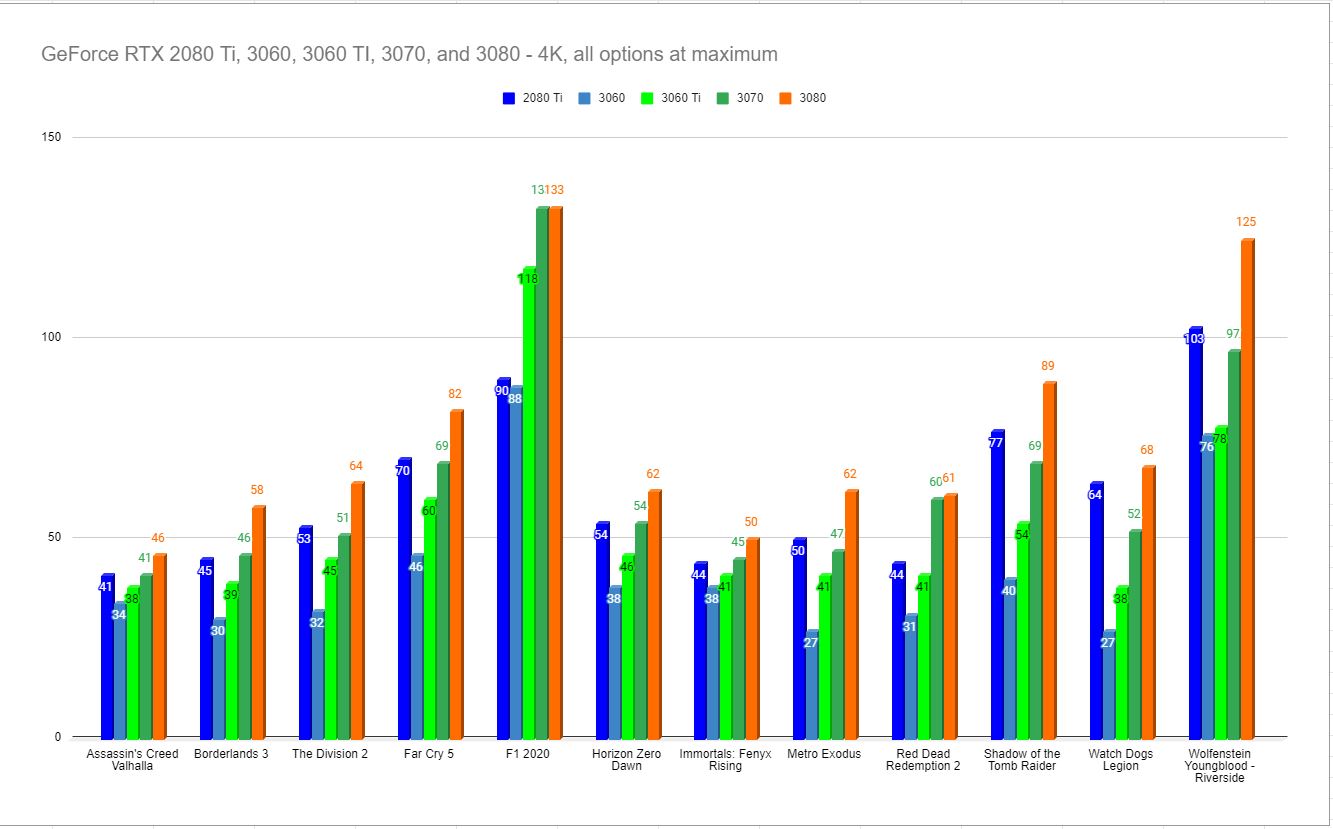

It should be very clear that the RTX 3060 Ti and 3060 are not aimed at the 4K market, but what’s surprising is that the wheels didn’t fall off. Turning in some respectable numbers, the 3060 is still able to pump out 31fps for Red Dead Redemption 2, and 40 for Shadow of the Tomb Raider. In fact, it stays above the 30 mark on just about everything — something unexpected for what can easily be classified as a “value” card.

Analysis:

There are a lot of interesting bits of information to glean from these benchmarks. Sure, the standouts are the big number jumps, but they also indicate something more subtle. Games that take advantage of new lighting like RTX instead of performing complex calculations for lighting and shadows see a sizable boost over their contemporaries without that tech. By way of example, Assassin’s Creed Odyssey is notoriously CPU-bound, and since it runs on the same Anvil engine, so is Assassin’s Creed: Valhalla, so throwing more hardware at it will only go so far. If the game were to take advantage of tech like RTX, we could see that constraint removed, likely granting huge gains.

It’s important to note that many of the games on our current benchmark list are CPU-bound. The interchange between the CPU, memory, GPU, and storage medium creates several bottlenecks that can interfere with your framerate. If your graphics card can ingest gigs of information every second, but your crummy mechanical hard drive struggles to hit 133 MB/s, you’ve got a problem. If you are using high-speed Gen3 or Gen4 M.2 SSD storage, that drive can hit 3 or even 4 GB/s, but if your older processor isn’t capable of processing it, it’s not going to be able to feed your GPU either. Supposing you’ve got a shiny new 10th Gen Intel CPU, you may be surprised that it also may not be fast enough for what’s under the hood of an RTX 3070 or RTX 3080, and we see some of that phenomenon in the benchmarks above. That said, if you aren’t ready to upgrade your entire system, the RTX 3060 might just extend the life of your existing rig.
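To make the storage bottleneck concrete, here is a small Python sketch comparing how long it takes to pull the same amount of game data off each kind of drive. The drive speeds are the ones quoted above. The 8 GB asset size is a hypothetical figure I picked for illustration, and the function names are my own.

```python
# Drive speeds quoted in this review, in MB/s.
DRIVES_MB_PER_S = {
    "mechanical hard drive": 133,
    "M.2 SSD at 3 GB/s": 3000,
    "M.2 SSD at 4 GB/s": 4000,
}

# Hypothetical amount of game data to load; not a figure from any benchmark.
ASSET_SIZE_MB = 8000


def load_seconds(size_mb, speed_mb_per_s):
    """Best-case time to read size_mb at a sustained speed_mb_per_s."""
    return size_mb / speed_mb_per_s


def pipeline_throughput(*stage_speeds):
    """A chain of stages can move data no faster than its slowest stage."""
    return min(stage_speeds)


for drive, speed in DRIVES_MB_PER_S.items():
    print(f"{drive}: {load_seconds(ASSET_SIZE_MB, speed):.1f} seconds")
```

The `pipeline_throughput` helper captures the broader point: whether the slow link is the drive, the CPU, or the memory, the GPU only ever sees data as fast as the slowest stage can deliver it.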

Speaking plainly, the only real thing I can say I don’t like about the RTX 3060 is the tighter memory interface. The drops in performance at higher resolutions are obvious, and we don’t see those with the RTX 3060 Ti as much. Compensating with DLSS 2.0 is more than possible, so perhaps it won’t be as big a deal, especially with NVIDIA bringing massive framerate upgrades to all sorts of games recently. Time will tell.

Price to Performance:

With the PlayStation 5 and Xbox Series X available now, there has been a non-stop barrage of advertisements around 4K gaming and how both platforms can deliver 120fps. What we’ve seen from the launch games is that very few are capable of delivering both at the same time. Most games push the resolution down to 1080p to hit 120fps, though there are some absolutely gorgeous standouts like Ori and the Will of the Wisps that indeed hit that 4K/120 target. We’ll have to see whether developers are able to deliver on that promise with more time on the new hardware. Why am I talking about consoles in a video card review? Well, they have a few things in common you should think about before you decide which card to purchase.

When buying a PlayStation 5, for example, you’ll want to think about the other components in your setup. Perhaps you have a TV that is capable of 4K, but can it handle 120Hz refresh rates, or has the manufacturer ticked that box by filling every other frame with a black frame? Similarly, does your receiver support these resolutions and framerates, or will you be forced to skip your excellent surround sound system because it would choke the signal back to 60Hz? The same applies to your PC. Yes, the 3060 supports HDMI 2.1, and yes it can deliver up to 8K output in theory, but you can see from the benchmarks that only the most optimized games are punching above 60 on this card. That said, with DLSS enabled, we are seeing incredible gains in games like Call of Duty: Black Ops Cold War, so perhaps 120+ is possible, but I have my doubts. If you need those sorts of framerates and resolutions, save your pennies and move up a weight class.

Make no bones about it, cards like the Titan, the 2080 Ti, and the 3090 are expensive. They represent the approach of not worrying about whether they should and simply whether they could. I love that kind of crazy as it really pushes the envelope of technology and innovation. NVIDIA held an 81% market share for the GPU market last year, and they easily could have sat back and iterated on the 2080’s power and delivered a lower cost version with a few new bells and whistles attached. That’s not what they did. They owned the field and still came in with a brand new card that blew their own previous models out of the water. The GeForce RTX 3070 has more power than the 2080 Ti and it costs $500 versus the $1200+ you’ll fork out to get your hands on the previous generation’s king. Similarly, the RTX 3080 eclipses everything on the market, even their Titan RTX, and at $699 it does so in a fashion that beats it and takes its lunch money. The RTX 3060 Ti slips into a sweet spot at $399, but the RTX 3060 is just $329 at launch. NVIDIA is once again ahead of the power curve on their highest end flagship cards, but the RTX 3060 fills a spot for those who get itchy at the prospect of spending more than $400 on a single component. Put plainly, we haven’t seen a generational leap like this, maybe ever. The fact that NVIDIA priced it the way they did makes me think they had a reason, and I don’t think that reason is AMD.

Sure, I’m certain the green team is worried about how the new generation of consoles could impact their market, but as someone who has worked in tech for a very long time, I think there could be another reason. When you go to a theme park, there are signs that say “You must be this tall to ride this ride,” and they are there for your safety. But safety is rarely fun or exciting. We’ve been supporting old technology like mechanical hard drives and outdated APIs for a very long time. Windows 10 came out five years ago, but there are still plenty of folks who want to use Windows 7. Not pushing the envelope stifles innovation, and it stops us from realizing the things we could achieve. By occasionally raising that “this tall” bar to introduce a new day and a new way, we send a message to consumers that it’s time to upgrade, and we send a strong signal to developers that they can push their own envelopes. That’s how we get games like Cyberpunk 2077 (visually speaking, anyway), and it’s how we see lighting like we do in Watch Dogs: Legion. It’s what takes us from this, to this. There’s no better time to embrace the future than right now, and at a price to performance value that has seemed impossible, the RTX 3060 continues to buck the pricing trends of the past. With power exceeding the GeForce RTX 2060, and matching the 2070, a card that debuted at $529, the RTX 3060 provides an incredible power-to-price ratio.